A new artificial intelligence feature from Grammarly, designed to offer writing advice "inspired by" subject matter experts, has drawn controversy for apparently using people’s identities and published work without their explicit consent. The tool, branded "Expert Review," generates suggestions that mimic the editorial styles and insights of prominent figures, including journalists, authors, and academics, raising significant ethical and legal questions about data use and intellectual property in the age of AI.

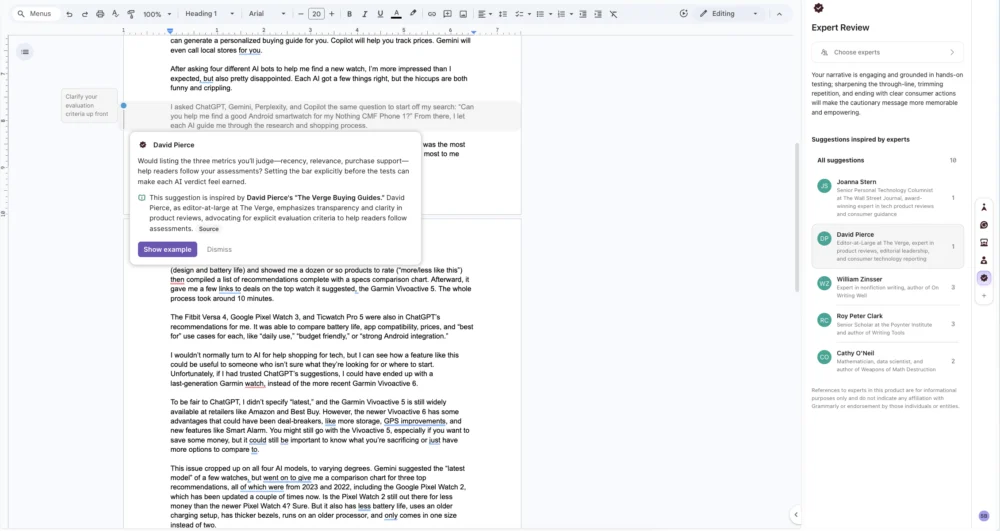

The "Expert Review" feature, launched in August, claims to improve users’ writing by filtering it through the "lens of industry-relevant perspectives." Once activated in the Grammarly sidebar, it analyzes the user’s text and returns AI-generated feedback said to draw inspiration from a curated list of experts. The roster includes well-known figures such as author Stephen King, astrophysicist Neil deGrasse Tyson, and the late astronomer Carl Sagan, among others. The stated aim is to give users a deeper understanding of their work through insights that echo the expertise of these influential figures.

However, early testing and user reports have revealed a more complex and concerning reality. In one notable case, a writer found AI-generated comments on their draft presented as "inspired by" Nilay Patel, editor-in-chief of The Verge, and by other senior Verge editors, including David Pierce, Sean Hollister, and Tom Warren. Crucially, these people had reportedly not given Grammarly permission to use their professional identities or writing styles in the "Expert Review" feature. The discovery points to a potential appropriation of personal brands and intellectual output without the knowledge or consent of the people involved.

Further examination found numerous other tech journalists and writers in the AI’s purported expert pool. They include former Verge editors Casey Newton and Joanna Stern; former Verge writer Monica Chin; Lauren Goode of Wired; Mark Gurman and Jason Schreier of Bloomberg; Kashmir Hill of The New York Times; Kaitlyn Tiffany of The Atlantic; Wes Fenlon of PC Gamer; Raymond Wong of Gizmodo; Richard Leadbetter, founder of Digital Foundry; Mark Spoonauer, editor-in-chief of Tom’s Guide; Katharine Castle, former editor-in-chief of Rock Paper Shotgun; and Kat Bailey, former news director at IGN. Their inclusion raises a critical concern: Grammarly may be leveraging their public personas and published work to enhance its AI offerings without proper attribution or authorization.

Adding to the unease, the descriptions attached to some of these "experts" contain inaccuracies, such as outdated job titles, which suggests that Grammarly did not consult or verify details with the people themselves. Had the company sought permission, such errors would likely have been caught and corrected, lending further weight to the argument that it chose a less transparent approach.

In response to these allegations, Grammarly’s parent company, Superhuman, issued a statement. Alex Gay, vice president of product and corporate marketing at Superhuman, said, "The Expert Review agent doesn’t claim endorsement or direct participation from those experts; it provides suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply." This explanation frames the feature as an AI interpretation of publicly available works rather than an impersonation or endorsement.

However, when asked whether Superhuman had considered notifying the people named in the feature or obtaining their permission, Gay replied, "The experts in Expert Review appear because their published works are publicly available and widely cited." This rationale rests on the view that publicly accessible content grants implicit permission for AI models to analyze and synthesize it for derivative applications, a stance likely to be contested on both legal and ethical grounds.

The practical implementation of the "Expert Review" feature has also been found wanting, further complicating Grammarly’s defense. The feature has reportedly been prone to frequent crashes, hindering users’ ability to effectively "explore more deeply" the works of the supposed experts. Moreover, the "sources" linked by the AI have often led to spammy replicas of legitimate websites or outdated archived copies, rather than the original source material. This undermines the feature’s purported educational value and raises questions about the integrity of the AI’s data sourcing and citation mechanisms.

In some instances, the AI even directed users to unrelated links that were not written by the person whose work was supposedly being referenced. This points to significant attribution errors, in which suggestions presented under one expert’s name may actually derive from another person’s work. If so, it reveals a fundamental flaw in the AI’s ability to associate content with its original creators, one that persists even in the view that lets users inspect more details and sources.

The user interface of the "Expert Review" feature also contributes to the potential for misinterpretation. Within applications like Google Docs, the AI-generated suggestions are designed to visually mimic comments from real users. This creates an experience that closely resembles receiving direct editorial feedback from a human editor, potentially blurring the lines between AI-generated advice and personalized human critique. This visual parity could mislead users into believing they are receiving insights directly from the named expert, rather than an AI’s interpretation.

For example, a suggestion purportedly "inspired by" The Verge’s senior editor Sean Hollister advised adding a parenthetical to provide context that was already present elsewhere in the text. The author of this piece noted that the real Sean Hollister’s editing style typically favors conciseness and the avoidance of redundant explanations. Acting on the AI’s suggestion and presenting it to the actual Sean Hollister would likely result in its removal, highlighting the AI’s inability to truly replicate nuanced human editorial judgment, even when informed by an extensive corpus of an individual’s published work. The ability of an AI to ingest and mimic writing styles is one aspect; replicating the underlying decision-making process and editorial philosophy is an entirely different and far more complex challenge.

The broader implications of Grammarly’s "Expert Review" feature extend beyond this specific instance. The rapid advancement of AI technologies, particularly in natural language processing and content generation, presents a growing challenge for intellectual property rights and personal identity protection. As AI models become more capable of analyzing, synthesizing, and replicating human-generated content, questions of ownership, attribution, and consent become increasingly pressing.

This situation underscores a critical need for greater transparency and ethical guidelines in the development and deployment of AI tools. Users and creators alike must be aware of how their data is being used, how AI models are trained, and what the limitations and potential misrepresentations of AI-generated content might be. The "Expert Review" controversy serves as a potent reminder that while AI can offer powerful new functionalities, it must be developed and implemented with a profound respect for individual rights and intellectual property.

The legal framework governing AI-generated content and the use of personal data is still taking shape. Cases like this will likely inform future legislation and court decisions on copyright, defamation, and privacy in the digital age. The core questions are whether analyzing and synthesizing publicly available works for AI training constitutes fair use or infringement, and whether presenting AI-generated output that mimics specific individuals’ styles and identities without their explicit consent crosses an ethical and legal line.

Moving forward, companies developing AI tools that draw on existing content and public figures’ likenesses will face increased scrutiny. A proactive approach involving clear consent mechanisms, transparent data sourcing policies, and robust attribution practices will be crucial for building trust and avoiding legal repercussions. The promise of AI lies in augmenting human capabilities, not in appropriating and repurposing human identity and creative output without permission. The conversation sparked by Grammarly’s "Expert Review" feature is a vital step in defining the responsible future of artificial intelligence in creative and professional domains.