A critical security vulnerability has come to light: Google API keys that were long considered harmless, and that are widely embedded in publicly accessible client-side code for routine services, can now also serve as authentication credentials for Google’s Gemini AI assistant, exposing private data and content to unauthorized access.

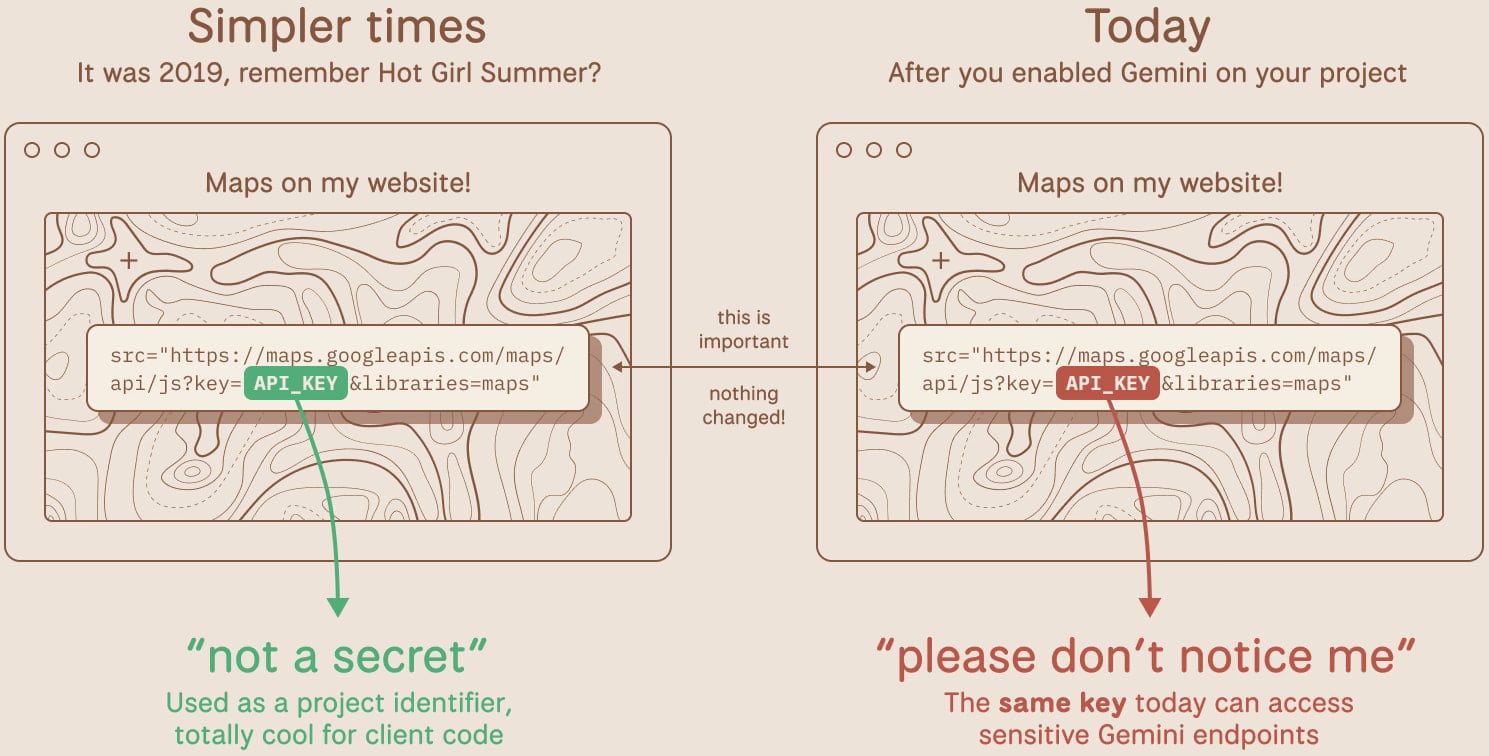

The discovery, made by cybersecurity researchers, highlights a significant shift in the security posture of Google Cloud API keys. Historically, keys used for services like Google Maps, YouTube embeds, or Firebase analytics were often considered mere identifiers, permissible for public exposure without posing substantial risk to sensitive internal systems. Their primary function was to track usage and authenticate requests against specific, limited services. However, the introduction of Google’s Gemini large language model (LLM) and its associated API has fundamentally altered this landscape. Once Gemini is enabled on a project, these same API keys can authenticate to the Gemini AI service, with no explicit change to their configuration and often without the developer being aware of it. Anyone holding such a key can then access potentially sensitive information or generate content at the owner’s expense.

Researchers at TruffleSecurity led the investigation, scanning internet pages at scale to identify exposed Google API keys. Their analysis, which included a scan of the November 2025 Common Crawl dataset (a large snapshot of popular websites), uncovered 2,800 live Google API keys embedded in the public source code of various organizations' websites. The exposure spanned diverse sectors, including major financial institutions, cybersecurity firms, and recruiting agencies, underscoring how pervasive the problem is. Even Google’s own infrastructure was found to contain such exposed keys, which shows that the challenge is systemic.

The core of the issue lies in a fundamental redefinition of API key sensitivity. Prior to Gemini, many developers operated under the assumption that certain Google Cloud API keys, particularly those designed for client-side functionality, were not secrets and could be safely exposed. This perception was reinforced by the limited scope of the services they authenticated. However, when developers began enabling the Generative Language API (Gemini) within their Google Cloud projects, these existing, publicly exposed keys silently gained the power to authenticate to the new, more sensitive AI service. This silent privilege escalation means that an attacker could simply inspect a website’s page source, extract an exposed API key, and then leverage it to make unauthorized calls to the Gemini API, potentially accessing private data, generating content, or executing other functions at the expense of the legitimate account holder.
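To illustrate how little effort the extraction step takes, the following is a minimal sketch of pulling key-shaped strings out of a page's source. It assumes the commonly observed Google API key format (the prefix `AIza` followed by 35 URL-safe characters), which is not stated in the report itself; the function name and the sample HTML are hypothetical, and the sample key is a made-up placeholder.

```python
import re

# Assumption: Google API keys follow the commonly observed shape
# "AIza" + 35 characters drawn from letters, digits, "_" and "-".
GOOGLE_API_KEY_RE = re.compile(r"AIza[0-9A-Za-z_\-]{35}")


def find_google_api_keys(page_source: str) -> list[str]:
    """Return unique key-shaped strings, in order of first appearance."""
    found: list[str] = []
    for candidate in GOOGLE_API_KEY_RE.findall(page_source):
        if candidate not in found:
            found.append(candidate)
    return found


# Made-up placeholder key for illustration only; it is not a real credential.
placeholder_key = "AIza" + "X" * 35
sample_html = (
    '<script src="https://maps.googleapis.com/maps/api/js?key='
    + placeholder_key
    + '"></script>'
)
print(find_google_api_keys(sample_html))
```

A match only shows that a string looks like a key. Whether it is live, and whether it can reach Gemini, depends on the project's configuration.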

The financial implications of such an exploit are considerable. The Gemini API is a paid service, and malicious actors could use exposed keys to rack up substantial charges on the victim’s account. TruffleSecurity’s analysis indicated that a threat actor making aggressive use of API calls could run up thousands of dollars in charges per day on a single compromised account, depending on the specific AI model used and the context window requested. This financial burden, coupled with the potential for data exfiltration or manipulation, presents a dual threat to organizations.
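The dependence on model and context size can be made concrete with a back-of-the-envelope calculation. The sketch below is only an illustration: the function name is hypothetical, and every rate and price in the example call is a placeholder chosen for round arithmetic, not Google's actual pricing or a figure from TruffleSecurity's analysis.

```python
def estimate_daily_cost(
    requests_per_minute: float,
    input_tokens_per_request: float,
    output_tokens_per_request: float,
    usd_per_million_input_tokens: float,
    usd_per_million_output_tokens: float,
) -> float:
    """Estimate one day of token charges for a constant request rate."""
    requests_per_day = requests_per_minute * 60 * 24
    input_cost = (
        requests_per_day * input_tokens_per_request / 1_000_000
    ) * usd_per_million_input_tokens
    output_cost = (
        requests_per_day * output_tokens_per_request / 1_000_000
    ) * usd_per_million_output_tokens
    return input_cost + output_cost


# All values below are illustrative placeholders, NOT real Gemini prices.
cost = estimate_daily_cost(
    requests_per_minute=60,            # one request per second
    input_tokens_per_request=100_000,  # a large context window
    output_tokens_per_request=1_000,
    usd_per_million_input_tokens=1.0,
    usd_per_million_output_tokens=5.0,
)
print(f"Estimated daily cost: ${cost:,.2f}")
```

Under these placeholder numbers the input tokens dominate the bill, which is why the size of the requested context matters so much to the total.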

What makes this vulnerability particularly insidious is its long-standing presence. Many of these API keys have been openly exposed in public JavaScript code for years, dormant but now suddenly endowed with dangerous capabilities without explicit notification or configuration changes. This "sleeping giant" scenario highlights a critical gap in security protocols: the need for continuous reassessment of asset sensitivity, especially in rapidly evolving technological landscapes like artificial intelligence. A key that was once merely an identifier for a maps service now acts as a gateway to powerful AI models, underscoring the dynamic nature of threat modeling.

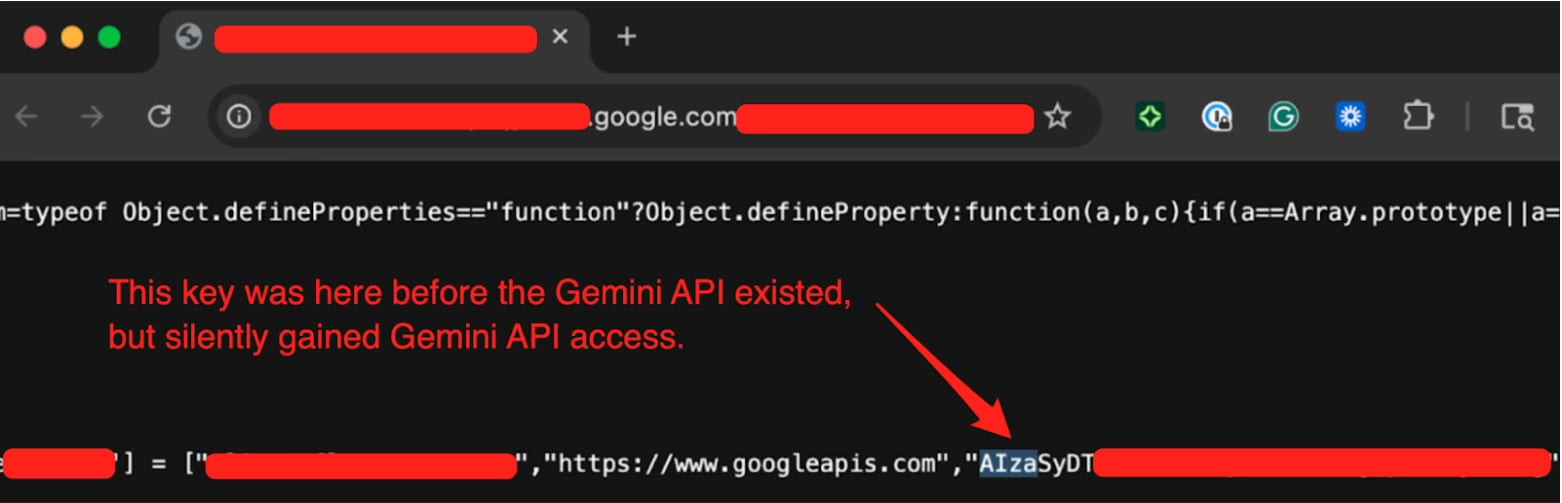

TruffleSecurity provided concrete evidence to Google, including samples from Google’s own infrastructure. One notable example involved an API key that had been publicly embedded in the page source of a Google product’s public-facing website since at least February 2023. The researchers successfully tested this key by calling the Gemini API’s /models endpoint, confirming its ability to list available AI models and thus demonstrating unauthorized access to the Gemini service.
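A check of this kind can be sketched in a few lines, and it is equally useful for testing keys you own. The sketch assumes the `v1beta` version of the Generative Language API and the `key` query parameter; the article only mentions the /models endpoint, so the exact URL is an assumption, and both function names are hypothetical. Run it only against keys that belong to you.

```python
import urllib.error
import urllib.parse
import urllib.request

# Assumption: the v1beta model-listing endpoint of the Generative Language API.
MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_models_url(api_key: str) -> str:
    """Build the model-listing URL with the key as a query parameter."""
    return f"{MODELS_URL}?{urllib.parse.urlencode({'key': api_key})}"


def key_can_list_models(api_key: str, timeout: float = 10.0) -> bool:
    """Return True if the endpoint answers HTTP 200 for this key.

    An HTTP error response (for example a rejected or restricted key, or a
    project without the API enabled) returns False. Network failures raise
    urllib.error.URLError and are left for the caller to handle.
    """
    request_url = build_models_url(api_key)
    try:
        with urllib.request.urlopen(request_url, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError:
        return False
```

A `True` result for a key that was only ever meant for Maps or a similar client-side service is the signal that it should be rotated or restricted.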

The timeline of Google’s response indicates a collaborative effort to address the issue. TruffleSecurity initially reported the problem to Google on November 21, 2025. Following a series of exchanges, Google officially classified the flaw as a "single-service privilege escalation" on January 13, 2026. In a statement, Google acknowledged the report and confirmed that it had "worked with the researchers to address the issue." The company further stated that it has already implemented proactive measures, including mechanisms to detect and block leaked API keys attempting to access the Gemini API. Planned measures include making new AI Studio keys default to a Gemini-only scope, which limits their potential for broader access, and sending proactive notifications when API key leaks are detected.

This incident serves as a crucial reminder of the evolving challenges in cloud security and developer best practices. API keys, fundamentally, are credentials, and like all credentials, they require robust management and protection. The principle of "least privilege" dictates that keys should only have the minimum permissions necessary for their intended function. The shift in Google API key sensitivity underscores the importance of this principle, especially when new services or capabilities are introduced that might inherit permissions from existing, less secure configurations.

From a developer’s perspective, immediate action is warranted. Organizations are strongly advised to audit all API keys across their environments, particularly those exposed in public client-side code, to determine if the Generative Language API (Gemini) is enabled for the associated projects. Any publicly exposed keys with Gemini access should be rotated immediately and replaced with properly secured credentials. Furthermore, implementing secure secret management practices, such as using environment variables, dedicated secret management services, or server-side proxies for API calls, is paramount to prevent future exposure. Tools like TruffleHog, an open-source solution developed by TruffleSecurity, can assist developers in proactively detecting live, exposed keys within their codebases and repositories.
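As one illustration of the environment-variable approach, a server-side component can read its key at startup and refuse to run without it, so the key never appears in code shipped to the browser. This is a minimal sketch, not a complete secret-management setup; the variable name `GEMINI_API_KEY` and both helper functions are hypothetical.

```python
import os
from collections.abc import Mapping
from typing import Optional


def load_api_key(
    env: Optional[Mapping[str, str]] = None,
    name: str = "GEMINI_API_KEY",  # hypothetical variable name
) -> str:
    """Read the key from the environment and fail loudly if it is missing."""
    source = os.environ if env is None else env
    key = source.get(name, "").strip()
    if not key:
        raise RuntimeError(f"{name} is not set; refusing to start without it")
    return key


def redact(key: str, visible: int = 4) -> str:
    """Mask a key for log output, keeping only the first few characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return key[:visible] + "*" * (len(key) - visible)


# Example with an in-memory mapping and a made-up value, so the sketch
# runs without touching the real environment.
example_key = load_api_key({"GEMINI_API_KEY": "AIzaSECRET"})
print(redact(example_key))
```

Passing the environment in as a parameter keeps the function easy to test, and redacting the key before logging avoids creating a second leak in log files.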

Beyond immediate remediation, this event highlights the broader implications for software supply chain security and the shared responsibility model in cloud computing. While cloud providers like Google secure the underlying infrastructure, the security of applications and data within that infrastructure remains a shared responsibility with the customer. Misconfigurations, such as publicly exposing API keys, fall squarely within the customer’s domain. As AI capabilities become increasingly integrated into enterprise applications, developers must adopt a more stringent security mindset, conducting thorough threat modeling for any new service integration and rigorously evaluating the potential for privilege escalation from seemingly benign components.

Such vulnerabilities will likely become more prevalent as AI models are integrated into a wider array of existing systems. Organizations must anticipate that the sensitivity of digital assets can change as technology advances. Continuous monitoring, proactive threat intelligence, and a culture of security-first development are no longer optional but essential for navigating a rapidly evolving cybersecurity landscape, especially with the accelerated adoption of artificial intelligence. Moreover, regulatory and compliance frameworks, such as GDPR, CCPA, and HIPAA, will increasingly scrutinize how organizations manage access to powerful AI systems and the data they process, making robust API key management a critical aspect of compliance. The Google API key incident is a stark illustration of how a seemingly minor oversight can rapidly escalate into a significant security and financial risk in the era of pervasive AI.