Meta Platforms is introducing a new Instagram feature that alerts parents when their teenage children repeatedly search for content related to self-harm and suicide. The initiative marks a notable development in social media companies' ongoing efforts to address the mental well-being of young users. Its aim is to connect potential distress signals with parental intervention.

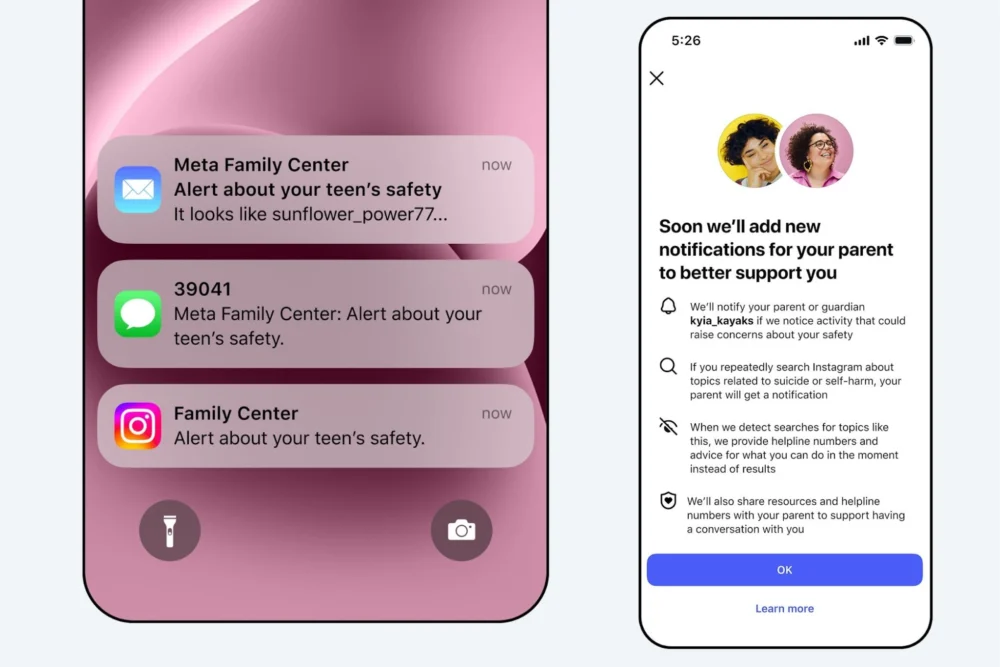

The feature will roll out gradually across the United States, United Kingdom, Australia, and Canada. It notifies parents if a teenager repeatedly searches for terms explicitly associated with suicide or self-harm within a short period. The alerts are opt-in and are built into Instagram's existing parental supervision tools, which Meta presents as part of its effort to create a more supportive digital environment for minors. The company has said the system will expand to other regions later in the year, signaling a broader focus on mental health support across its platforms.

Meta's announcement states that the vast majority of teenagers do not search for such sensitive content on Instagram. When such searches do occur, the platform's established policy is to block access to harmful material and direct users to resources and helplines that offer immediate support. Meta frames the parental alerts as a tool that gives parents timely information about when their child may need them to step in. The company also says it is deliberately limiting how often alerts are sent, because a flood of notifications could lead parents to tune them out. The goal is for each alert to be meaningful and actionable.

The measure arrives as concerns about the impact of social media on youth mental health continue to grow. Experts in adolescent psychology and digital safety have long called for stronger mechanisms to identify and flag signs of distress among young people, who spend significant portions of their lives online. The requirement that a teen "repeatedly tries to search" for such terms is a crucial element of the design. It focuses on behavioral patterns rather than isolated incidents, which could help distinguish curiosity or educational exploration from genuine expressions of struggle.

The implementation of such a feature raises several important considerations. First, the alerts will only be as effective as Meta's ability to identify relevant search terms while minimizing false positives. False alarms could cause parental anxiety and strain parent-child relationships, while missed signals could have devastating consequences. Second, because the feature is opt-in, it will reach only families already using parental controls. Outreach and education will be crucial to encourage adoption among parents who may be less tech-savvy or unaware of the risks their children face online.

Beyond the immediate notification, the accompanying resources offered to parents are equally vital. The aim is not merely to inform but to guide. These resources are expected to provide frameworks for initiating sensitive conversations, understanding the nuances of adolescent mental health, and identifying appropriate professional support. This holistic approach acknowledges that a digital alert is only the first step; the subsequent human connection and intervention are paramount.

The development also fits broader technological trends. Meta also announced that a similar alert system for its AI chatbots will arrive later this year. This suggests a company-wide push to build safety and well-being features across its product portfolio. As AI becomes more sophisticated and more embedded in user experiences, the need for ethical guardrails and supportive features grows. AI systems can inadvertently expose vulnerable users to harmful content or generate responses that worsen distress, which makes robust monitoring and intervention mechanisms necessary.

Looking ahead, the success of Instagram’s parental alert system will likely be evaluated based on several key metrics: adoption rates, the perceived usefulness by parents, and, most importantly, its impact on reducing instances of self-harm and suicide among teenage users. Longitudinal studies will be necessary to ascertain whether these alerts translate into tangible positive outcomes for young people’s mental health. Furthermore, the evolution of these features will need to keep pace with the ever-changing landscape of online behavior and emerging digital threats.

The introduction of these parental safeguards on Instagram is a significant, if early, step in the ongoing debate about social media's role in adolescent mental health. It signals a shift from reactive content moderation toward a more preventive and supportive model that gives parents tools to better protect their children online. As the feature rolls out, parents, policymakers, mental health advocates, and the broader technology industry will watch its implementation and impact closely, and the results should offer insight into the future of online child safety. The challenge now is to ensure that these technological interventions are complemented by comprehensive mental health support systems and a sustained societal commitment to the well-being of young people in an increasingly digital world.