Advanced artificial intelligence tools have moved from novelties to indispensable components of modern enterprise and individual productivity. These platforms, which include large language models, code generators, and research assistants, are now embedded across diverse sectors, where they handle sensitive corporate data, proprietary codebases, and critical research materials. This integration boosts efficiency, but it has also given rise to a clandestine economy in which premium AI access is traded as a sought-after underground asset, posing new challenges for cybersecurity.

The Ubiquity and Operational Criticality of AI

Over the past few years, AI applications like conversational agents, intelligent assistants, and content generation platforms have become foundational to daily operations for individuals and organizations alike. From automating customer service and optimizing supply chains to facilitating complex software development and scientific research, these tools are no longer merely convenient; they are operationally critical. Businesses leverage them to parse internal documents, synthesize vast amounts of data, and streamline creative processes, inadvertently exposing potentially sensitive information to these powerful, yet sometimes vulnerable, systems. The deep embedding of AI into workflows means that disruption or unauthorized access can have cascading effects, impacting productivity, data security, and competitive advantage.

The Dark Web’s New Frontier: Premium AI Access

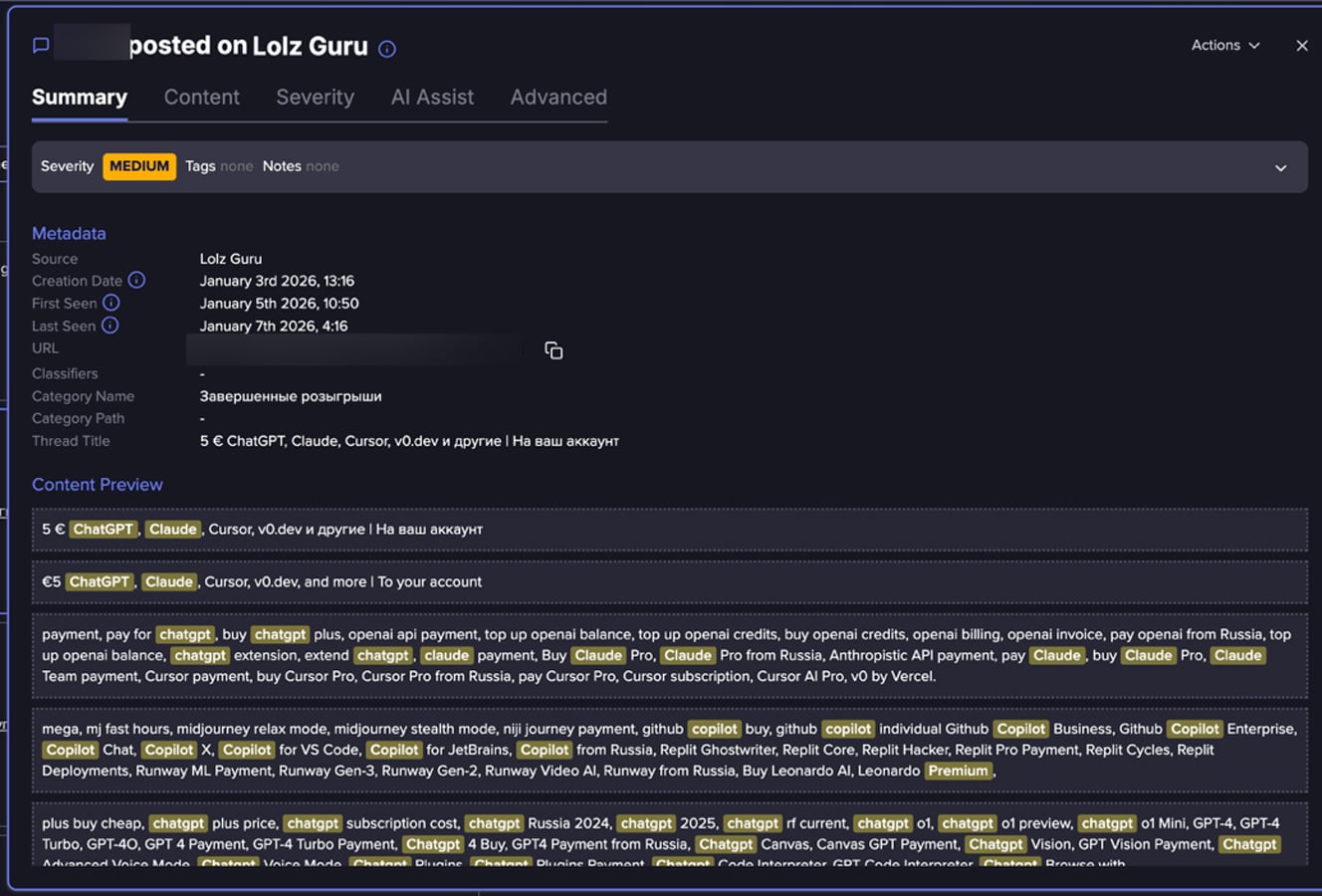

As the intrinsic value of AI services escalates, so too does their allure within the cybercrime ecosystem. Access to high-tier AI models significantly reduces the overhead for malicious actors, enhancing the quality of their illicit outputs and accelerating operations that once demanded specialized human expertise or considerable time investments. Recent analyses conducted by cybersecurity intelligence firms reveal a burgeoning underground marketplace dedicated to the illicit trade of premium AI platform access. This isn’t merely an aggregation of isolated instances of account compromise; it signifies a structured, recurring pattern of commercial exchange, where access to these powerful digital services is advertised, bundled, and resold. These offerings frequently feature discounted subscriptions, combined access to multiple AI platforms, or claims of bypassing typical usage limitations, indicating a sophisticated market mirroring legitimate digital service economies.

Mechanisms of Illicit Acquisition

Underground forum posts rarely reveal how sellers obtain the accounts they offer, but patterns in the observed data suggest several probable pathways for threat actors to obtain AI accounts:

- Credential Compromise: This remains a primary vector. Phishing campaigns, malware designed to steal credentials, or data breaches exposing login information for various online services can grant unauthorized access to AI accounts. Given the widespread reuse of passwords, a compromise on one platform can often lead to unauthorized access on others, including premium AI services.

- Exploitation of Vulnerabilities: Software vulnerabilities within AI platforms or their associated infrastructure could be exploited to bypass authentication mechanisms or create unauthorized accounts.

- Abuse of Free Trials and Promotional Offers: Threat actors may systematically register for numerous free trials or leverage promotional offers using stolen or fabricated identities, then resell the temporary access before it expires. This method allows for large-scale provisioning of short-term, illicit access.

- Insider Threats: Disgruntled employees or individuals with legitimate access to corporate AI accounts could deliberately or inadvertently leak credentials or provide access to external parties.

- Automated Account Creation and Policy Evasion: Sophisticated bots or scripts could be employed to create accounts at scale, potentially circumventing registration limits or identity verification processes, especially when combined with stolen personal data.

Collectively, these methods point to a complex interplay of direct account compromise, large-scale automated provisioning, and systematic policy abuse, highlighting the diverse tactics employed by those seeking to profit from illicit AI access.

The Lure of the Underground Market: Why Buyers Engage

The burgeoning demand for illicit AI access stems from several compelling factors for potential buyers:

- Cost Evasion: Premium AI subscriptions can be expensive, particularly for advanced models or high-volume usage. The underground market offers significantly discounted rates, making powerful tools accessible to individuals or groups with limited budgets.

- Anonymity and Deniability: Engaging with legitimate AI services often requires personal identification, payment information, and adherence to terms of service. Illicit accounts offer a layer of anonymity, shielding users from direct accountability for their actions.

- Bypassing Restrictions and Acceptable Use Policies: Official AI platforms implement content filters, rate limits, and policies designed to prevent misuse. Illicitly acquired accounts or modified access often promise to circumvent these restrictions, allowing users to generate content or perform tasks that would otherwise be prohibited.

- Facilitating Malicious Activities: For cybercriminals, direct access to AI tools through legitimate channels carries the risk of detection and potential reporting. A compromised account ties the activity to someone else’s identity, which makes their use of AI harder to trace back to them.

Weaponizing AI: Criminal Applications and Escalating Threats

Access to sophisticated AI platforms empowers threat actors to execute a wide array of illicit activities, many extending far beyond simple misuse of the services themselves.

- Sophisticated Phishing and Social Engineering: Generative AI tools make it far easier to produce convincing phishing messages, elaborate scam scripts, and multilingual social engineering content at scale. AI-generated text can mimic human communication patterns, improve grammatical accuracy, and tailor messages to specific targets, all of which make fraudulent communications more realistic and effective. Law enforcement agencies, such as Europol, have highlighted how criminal groups are leveraging generative AI to automate and scale phishing and fraud operations, creating content with greater speed and sophistication than was previously practical.

- Personalized Deception Campaigns: Attackers are using AI to craft hyper-personalized social engineering campaigns. By analyzing publicly available information or stolen data, AI can generate malicious messages that resonate deeply with individual targets, exploiting their interests, connections, or vulnerabilities with surgical precision.

- Malware Development and Evasion: Although the underlying research does not cover it explicitly, a logical extension of AI’s coding capabilities is their potential use in generating malicious code, developing polymorphic malware to evade detection, or assisting in vulnerability research. AI could help refine exploits, write shellcode, or even generate entire malware strains, lowering the technical bar for aspiring cybercriminals.

- Automation of Illicit Workflows: Beyond content generation, AI can automate repetitive tasks inherent in cybercrime, such as identifying vulnerable targets, reconnaissance, or orchestrating multi-stage attacks. This increases the efficiency and scalability of criminal operations.

- Synthetic Media for Impersonation and Deception (Deepfakes): Many advanced AI platforms include capabilities for generating highly realistic images, audio, or video. Threat actors can exploit these features to create synthetic content (deepfakes) for sophisticated impersonation scams, extortion, or disinformation campaigns. Imagine AI-generated audio mimicking a CEO’s voice to authorize fraudulent financial transfers, or video deepfakes used in blackmail schemes.

- Information Operations and Disinformation: AI can produce vast quantities of propaganda, fake news articles, or social media content designed to manipulate public opinion, sow discord, or amplify specific narratives, often with a level of linguistic nuance that makes it difficult to discern from human-generated content.

The Emerging Underground Market for AI Accounts: An Ecosystem View

The findings from cybersecurity researchers underscore that threat actors and illicit sellers now perceive AI accounts as a valuable black-market commodity. These accounts are being seamlessly integrated into the existing cybercrime ecosystem, which historically trades in compromised credentials, stolen identities, and illicit digital services. Offers for AI access frequently appear alongside listings for stolen email accounts, developer tools, Remote Desktop Protocol (RDP) access, Virtual Private Servers (VPS), and verification infrastructure, signaling AI’s established position within the criminal "IT stack."

The analysis reveals a variety of AI-related offerings, ranging from straightforward resales of premium subscriptions to more ambiguous claims of unrestricted or extended access. These offerings are typically presented in clear, product-like language, making them accessible even to buyers lacking deep technical expertise. Examples of such offerings observed in these forums include:

- "ChatGPT Premium Account – Lifetime Access"

- "Claude Pro – Unlimited Generations"

- "Bundled AI Access – ChatGPT, Midjourney, Perplexity"

- "AI API Keys – Full Access, No Limits"

In many instances, multiple AI services are advertised together as a single bundle. Promotional language such as "premium access," "no limits," or "full API access" is commonly employed. While the veracity of these claims cannot always be confirmed, they are clearly designed to attract buyers seeking fewer restrictions, enhanced capabilities, or greater flexibility than official subscription plans provide. This trend lowers the barrier to entry for a broader spectrum of malicious actors, enabling individuals without advanced technical skills to use sophisticated AI for nefarious purposes. As AI services continue to evolve and spread, their perceived value and liquidity within underground markets are likely to rise further.
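The listing patterns described above (named services, bundles, and recurring promotional phrases) are regular enough that an analyst could tag them with simple keyword matching during triage. The following is a minimal sketch, not a description of how any research firm actually processes listings. The function name `tag_listing` and the two watchlists are hypothetical, and their entries are drawn only from the example listings quoted in this article.

```python
import re

# Hypothetical watchlists built only from the example listings quoted above.
# A real watchlist would be longer and maintained by an analyst.
SERVICES = ("chatgpt", "claude", "midjourney", "perplexity")
CLAIMS = ("lifetime access", "unlimited", "no limits", "full access", "premium")


def tag_listing(title: str) -> dict:
    """Tag a listing title with the services and promotional claims it mentions."""
    text = title.lower()
    services = [s for s in SERVICES if s in text]
    claims = [c for c in CLAIMS if c in text]
    return {
        "services": services,
        "claims": claims,
        # More than one named service, or the word "bundle", suggests a bundle.
        "is_bundle": len(services) > 1 or "bundle" in text,
        "mentions_api_keys": bool(re.search(r"\bapi keys?\b", text)),
    }


if __name__ == "__main__":
    for title in (
        "Bundled AI Access - ChatGPT, Midjourney, Perplexity",
        "AI API Keys - Full Access, No Limits",
    ):
        print(title, "->", tag_listing(title))
```

Substring matching of this kind is deliberately crude. It will miss misspellings and obfuscated names, so it is better suited to sorting listings for human review than to making decisions automatically.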

Mitigation Strategies for Organizations

Addressing this evolving threat landscape necessitates a multi-faceted approach, combining robust technical controls with comprehensive organizational policies and continuous vigilance. Organizations must prioritize the security of their AI integrations to prevent them from becoming conduits for illicit activities.

- Enforce Multi-Factor Authentication (MFA): Implementing mandatory MFA for all AI platform accounts, especially those accessing sensitive data, adds a critical layer of security beyond just passwords. This significantly complicates unauthorized access even if credentials are stolen.

- Regular Monitoring for Account Compromise: Organizations must proactively monitor their internal systems and external threat intelligence feeds for any indicators of compromise related to AI accounts. This includes monitoring for unusual login patterns, suspicious activity within AI platforms, or the appearance of corporate AI account credentials on dark web forums.

- Comprehensive Employee Training and Awareness: Educate employees on the risks associated with AI tool usage, including phishing attempts targeting AI accounts, the dangers of sharing sensitive information with public AI models, and the importance of adhering to corporate AI usage policies.

- Implement Granular Access Controls: Adopt a principle of least privilege for AI platform access. Ensure that employees only have the necessary permissions and access levels required for their specific roles, minimizing the potential impact of a compromised account.

- Establish and Enforce AI Usage Policies: Develop clear, stringent policies governing the use of AI tools within the organization. These policies should cover data privacy, acceptable use, data input restrictions (e.g., no confidential data in public AI models), and compliance with regulatory requirements.

- Conduct Regular Security Audits and Assessments: Periodically audit the security configurations of AI platforms used by the organization and assess potential vulnerabilities in their integration with internal systems. This includes reviewing API keys, access tokens, and data handling practices.

- Leverage Threat Intelligence: Subscribe to and actively utilize threat intelligence services that monitor underground markets for compromised credentials and discussions related to AI account trading. Proactive intelligence gathering can provide early warnings of potential threats.

- Secure API Integrations: For organizations developing their own AI integrations or using AI APIs, ensure robust API security measures, including strong authentication, rate limiting, and continuous monitoring for anomalous usage patterns.
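Two of the controls listed above, rate limiting and monitoring for anomalous usage, can be illustrated together with a small sketch. It assumes an organization can observe, for each request, an API key identifier, a source address, and a timestamp. The class name `KeyUsageMonitor`, the alert labels, and the default limits are all hypothetical and chosen only for illustration. Production systems would typically rely on the controls offered by the AI provider or an API gateway rather than on code like this.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class KeyUsageMonitor:
    """Per-key sliding-window rate limit plus a first-seen-source check."""

    max_requests: int = 5          # hypothetical limit per window
    window_seconds: float = 60.0   # hypothetical window length
    _recent: dict = field(default_factory=lambda: defaultdict(deque))
    _known_sources: dict = field(default_factory=lambda: defaultdict(set))

    def check(self, key_id: str, source: str, now: float) -> list[str]:
        """Record one request and return alert labels (empty list if none)."""
        alerts = []
        window = self._recent[key_id]
        # Drop timestamps that have fallen out of the sliding window.
        while window and now - window[0] >= self.window_seconds:
            window.popleft()
        if len(window) >= self.max_requests:
            alerts.append("rate_limit_exceeded")
        else:
            window.append(now)
        # A source never seen for this key deserves a second look. The very
        # first request only establishes the baseline and raises no alert.
        seen = self._known_sources[key_id]
        if seen and source not in seen:
            alerts.append("new_source")
        seen.add(source)
        return alerts


if __name__ == "__main__":
    monitor = KeyUsageMonitor(max_requests=2, window_seconds=10.0)
    events = [
        ("k1", "10.0.0.1", 0.0),
        ("k1", "10.0.0.1", 1.0),
        ("k1", "10.0.0.1", 2.0),      # third request inside the window
        ("k1", "203.0.113.9", 11.0),  # same key, previously unseen source
    ]
    for key_id, source, ts in events:
        print(ts, source, monitor.check(key_id, source, ts))
```

A new source is not proof of compromise, since staff travel and change networks. In this sketch the alert is only a signal to correlate with other indicators, such as the appearance of the same credentials in threat intelligence feeds.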

Future Outlook

The trajectory of AI adoption suggests an increasing sophistication in both its legitimate applications and its malicious exploitation. The underground market for AI accounts is likely to grow in size and complexity, offering an even broader array of illicit services. This will fuel a continuous cat-and-mouse game between AI platform providers and security researchers on one side, and cybercriminals on the other. Organizations must remain agile, adapting their security posture to counter the evolving threats posed by AI-powered criminal enterprises. The future of cybersecurity will inevitably involve understanding and defending against AI-enabled adversaries, making robust AI governance and security paramount.