The global cybersecurity landscape is changing as malicious actors increasingly apply artificial intelligence across the full spectrum of their operations. This adoption of AI tools is accelerating attack timelines, increasing the scale of malicious campaigns, and significantly lowering the technical proficiency required to execute complex cyberattacks.

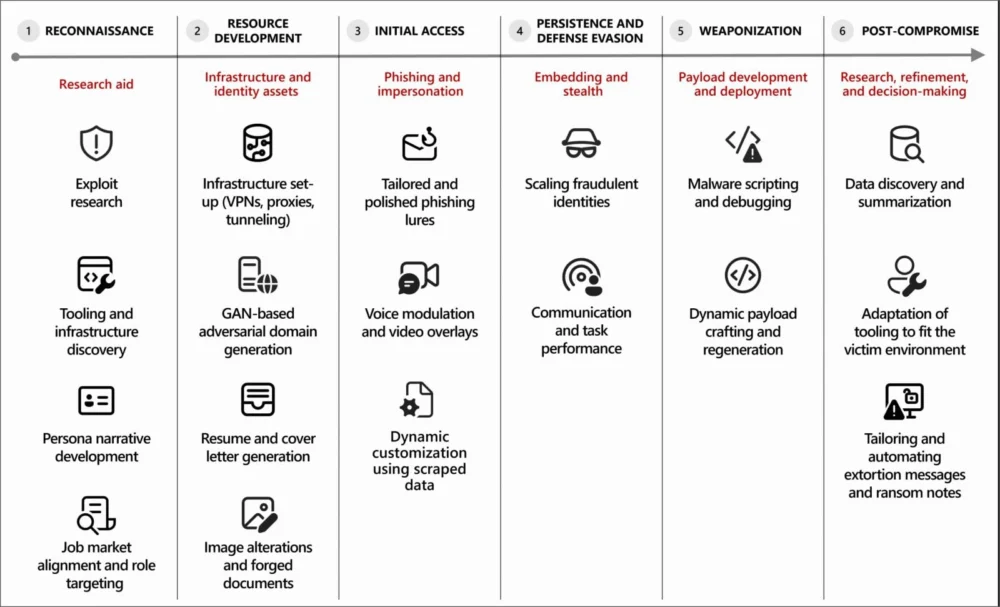

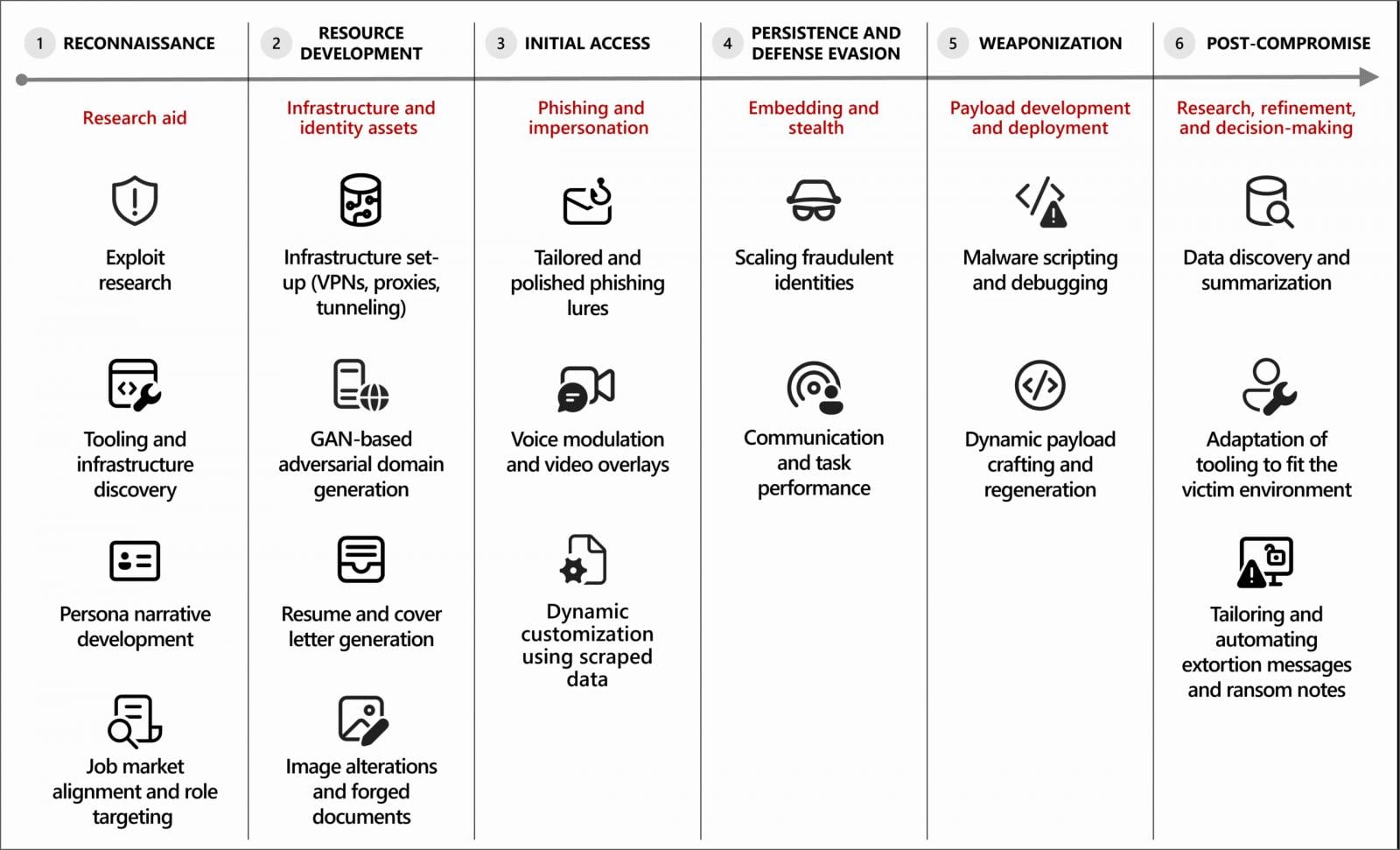

The integration of artificial intelligence, particularly generative AI models, marks a pivotal shift in the operational methodologies of threat actors. These systems are now being deployed for a diverse array of tasks, spanning initial reconnaissance, the crafting of highly convincing phishing campaigns, the development of attack infrastructure, the creation and refinement of malware, and post-compromise activities inside victim networks. This assistance serves as a force multiplier, streamlining tedious processes, reducing technical friction, and accelerating the pace of execution, all while human operators retain control over objectives, targeting, and deployment decisions.

AI as a Force Multiplier: Reshaping the Attack Lifecycle

The core utility of AI in offensive cyber operations lies in its capacity to automate, personalize, and optimize various stages of the attack lifecycle. Previously, many of these tasks demanded significant human expertise, time, and resources. AI effectively democratizes advanced attack capabilities, making sophisticated techniques accessible to a broader range of actors, including those with less specialized technical skills. This reduction in the barrier to entry poses a significant challenge for defenders, as the volume and sophistication of threats are likely to increase.

One of the most immediate impacts is the enhancement of efficiency and scalability. AI can rapidly process vast amounts of data, generate numerous attack variations, and manage multiple parallel operations at a pace that human operators cannot match. This allows threat actors to conduct more widespread campaigns, target a larger number of victims, and probe for vulnerabilities far faster than before. AI also raises the level of sophistication: it enables hyper-realistic content such as meticulously crafted spear-phishing emails, and it supports malware that adapts dynamically, both of which make detection significantly more challenging.

Deepening the Arsenal: AI Across Specific Attack Vectors

The strategic application of AI permeates numerous critical phases of a cyberattack:

- Reconnaissance and Intelligence Gathering: Beyond merely summarizing stolen data, AI algorithms can analyze colossal datasets derived from open-source intelligence (OSINT), leaked databases, and social media. This analytical power allows threat actors to identify critical vulnerabilities, pinpoint key personnel within target organizations, map network topologies, and even predict optimal attack vectors. AI can cross-reference disparate pieces of information to construct comprehensive profiles of targets, highlighting potential weaknesses or social engineering opportunities that might otherwise go unnoticed.

- Phishing and Social Engineering: Generative AI excels at producing highly personalized, contextually relevant, and linguistically flawless content. This capability is reshaping phishing campaigns, enabling the creation of convincing lures that are tailored to individual targets, mimic trusted contacts, or exploit current events. The ability to translate content seamlessly into multiple languages further expands the global reach of these campaigns. Beyond text, advances in deepfake technology, which is itself AI-driven, introduce the potential for highly deceptive voice and video impersonations. The goal is to maximize believability and reduce suspicion, making it harder for recipients to recognize fraudulent communications.

- Malware Development and Obfuscation: AI coding tools are proving invaluable in the creation and refinement of malicious software. Threat actors are utilizing these platforms to generate novel malware variants, troubleshoot errors in their code, and port existing malware components to different programming languages to evade signature-based detections. Early experiments even indicate the emergence of AI-enabled malware capable of dynamically generating scripts or modifying its behavior at runtime, making it highly polymorphic and resilient to conventional defensive mechanisms. This adaptive nature represents a significant leap from static malware, posing a formidable challenge for endpoint detection and response systems.

- Infrastructure Development and Operational Security: The rapid provisioning and configuration of command-and-control (C2) infrastructure is another area where AI offers substantial advantages. Threat actors are employing AI to quickly generate credible-looking fake company websites, set up proxy networks, and test the resilience and stealth of their deployments. By automating these setup processes, AI reduces the human footprint in infrastructure management, making it harder for defenders to track and disrupt C2 operations. This automation also allows for more agile adaptation to defensive countermeasures, which helps attackers maintain persistent access.

- Post-Compromise Activities: Once initial access is gained, AI can assist in automating subsequent steps such as data exfiltration, lateral movement within a network, and privilege escalation. AI models can analyze network configurations and user permissions to suggest optimal pathways for expanding control or locating valuable data, making the post-exploitation phase more efficient and less prone to detection.
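The point in the malware bullet above about evading signature-based detection can be illustrated with a deliberately harmless sketch. It assumes a defender's blocklist keyed on exact SHA-256 hashes; the names `KNOWN_BAD_HASHES` and `matches_signature` are hypothetical and stand in for no particular product. Two functionally equivalent, benign snippets play the role of "the same tool, rewritten".

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Two benign, functionally equivalent snippets (illustrative stand-ins only).
original = b"print('hello')\n"
rewritten = b"print( 'hello' )\n"

# Hypothetical blocklist that knows only the hash of the original sample.
KNOWN_BAD_HASHES = {sha256_hex(original)}


def matches_signature(sample: bytes) -> bool:
    """Exact-hash lookup: any byte-level change produces a different hash."""
    return sha256_hex(sample) in KNOWN_BAD_HASHES


print(matches_signature(original))
print(matches_signature(rewritten))
```

The rewritten sample behaves identically but no longer matches the stored hash, which is why defenders tend to pair static signatures with behavioral analysis rather than rely on hashes alone.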

Real-World Manifestations: Case Studies in AI Abuse

Observations from leading cybersecurity firms highlight tangible instances of AI being weaponized. For example, specific North Korean state-sponsored threat groups, identified as Jasper Sleet and Coral Sleet, have incorporated AI into elaborate remote IT worker schemes. These operations leverage AI tools to fabricate realistic digital identities, craft convincing resumes, and generate authentic-sounding communications, all designed to secure employment within Western companies. Once embedded, these operatives can maintain persistent access and exfiltrate sensitive information, essentially turning an external cyber threat into an insider risk.

Jasper Sleet actors have explicitly utilized generative AI platforms to streamline the development of fraudulent personas. This includes prompting AI to generate culturally appropriate name lists and email address formats tailored to specific identity profiles. Furthermore, these actors instruct AI tools to review job postings for software development and IT-related roles on professional platforms, extracting and summarizing required skills. This intelligence is then used to meticulously tailor fake identities and qualifications to perfectly match the demands of target roles, increasing the likelihood of successful infiltration. Coral Sleet, another state-aligned group, has similarly been observed using AI to rapidly generate fake company websites, provision essential infrastructure, and conduct testing and troubleshooting of their deployments, demonstrating a comprehensive integration of AI into their operational workflow.

A notable challenge for AI developers is the phenomenon of "jailbreaking" large language models (LLMs). When AI safeguards are implemented to prevent the generation of malicious code or content, threat actors actively employ techniques to circumvent these restrictions, effectively tricking LLMs into producing the desired harmful output. This ongoing cat-and-mouse game between AI safety measures and adversarial ingenuity underscores the inherent difficulties in controlling the malicious potential of rapidly evolving AI technologies.

Beyond generative capabilities, researchers are also documenting initial experimentation with agentic AI, where systems are designed to perform tasks autonomously and adapt their actions based on real-time feedback. For now, AI is used primarily to augment human decision-making rather than to run fully autonomous attacks. Even so, the potential for agentic AI to execute multi-stage attacks and adapt without direct human intervention represents a significant future concern.

Defensive Imperatives: Adapting to an AI-Augmented Threat Landscape

The rise of AI-powered cyberattacks necessitates a corresponding evolution in defensive strategies. Organizations must adopt an adaptive and proactive posture to effectively counter these advanced threats.

- AI-Powered Defenses: To combat AI-powered attacks, the cybersecurity community must increasingly leverage AI and machine learning in defensive applications. This includes advanced anomaly detection, behavioral analytics, predictive threat intelligence, and automated incident response systems capable of identifying and mitigating AI-generated threats in real time.

- Strengthening Identity and Access Management (IAM): Given the prevalence of AI in crafting social engineering campaigns and supporting insider threat scenarios, robust IAM practices are paramount. This entails multi-factor authentication (MFA) across all critical systems, continuous monitoring of credential usage for abnormal patterns, and stringent access controls based on the principle of least privilege. Organizations must treat schemes involving fraudulent remote IT workers with the same vigilance as traditional insider risks.

- Securing AI Systems: As AI becomes more integral to both offensive and defensive operations, the AI models themselves become potential targets. Protecting AI systems from adversarial attacks—such as data poisoning, model inversion, or prompt injection—is crucial. Ensuring the integrity and confidentiality of AI models and their training data is a burgeoning area of cybersecurity.

- Human Vigilance and Training: While AI automates, the human element remains irreplaceable. Comprehensive cybersecurity awareness training for employees, emphasizing the evolving nature of social engineering tactics and the realistic appearance of AI-generated content, is essential. Fostering a culture of skepticism and critical thinking regarding digital interactions is a foundational defense.

- Collaborative Threat Intelligence: The rapid evolution of AI-driven threats necessitates unprecedented levels of collaboration and threat intelligence sharing across industries, governments, and international bodies. Collective insights into new attack methodologies, jailbreaking techniques, and observed threat actor behaviors are vital for staying ahead of the curve.
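The IAM recommendation above, monitoring credential usage for abnormal patterns, can be sketched as a simple per-user baseline. This is a minimal illustration under stated assumptions, not a production detector: the `LoginEvent` fields, the `CredentialBaseline` class, and the two-hour tolerance are all hypothetical choices made for the example, and real deployments would draw on richer signals such as device, network, and session behavior.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LoginEvent:
    user: str
    country: str  # e.g. an ISO country code derived from the source IP
    hour: int     # hour of day, 0-23, in a single fixed time zone


class CredentialBaseline:
    """Tracks the countries and hours each user has logged in from."""

    def __init__(self, hour_tolerance: int = 2) -> None:
        self.hour_tolerance = hour_tolerance  # assumed threshold, tune per environment
        self._countries = defaultdict(set)
        self._hours = defaultdict(set)

    def learn(self, event: LoginEvent) -> None:
        """Record a login that is considered legitimate."""
        self._countries[event.user].add(event.country)
        self._hours[event.user].add(event.hour)

    @staticmethod
    def _hour_distance(a: int, b: int) -> int:
        """Distance between two hours on a 24-hour clock (23 and 1 are 2 apart)."""
        d = abs(a - b) % 24
        return min(d, 24 - d)

    def anomalies(self, event: LoginEvent) -> List[str]:
        """Return the reasons this login deviates from the user's baseline."""
        if event.user not in self._countries:
            return ["no baseline for user"]
        reasons = []
        if event.country not in self._countries[event.user]:
            reasons.append("new country")
        near_known_hour = any(
            self._hour_distance(event.hour, h) <= self.hour_tolerance
            for h in self._hours[event.user]
        )
        if not near_known_hour:
            reasons.append("unusual hour")
        return reasons


baseline = CredentialBaseline()
for h in (9, 10, 14):
    baseline.learn(LoginEvent("alice", "US", h))

print(baseline.anomalies(LoginEvent("alice", "US", 11)))
print(baseline.anomalies(LoginEvent("alice", "FR", 3)))
```

A flagged login here would be a prompt for step-up authentication or analyst review rather than an automatic block, since travel and schedule changes produce the same signals as credential misuse.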

The Future Outlook: An Escalating AI Arms Race

Observations from major technology and security entities, including Google and Amazon, corroborate these findings on the abuse of AI across all stages of cyberattacks. One reported incident, in which an AI-assisted hacker breached more than 600 FortiGate firewalls in five weeks, underscores the efficiency and scale that AI can bring to offensive operations.

The long-term implications of this trend are profound. We are witnessing the nascent stages of an AI arms race in cyberspace, where offensive and defensive capabilities are continually pushed forward by algorithmic innovation. This trajectory raises significant ethical and regulatory questions regarding the responsible development and deployment of AI. The potential for fully autonomous AI-driven cyber warfare, where systems operate with minimal human oversight, presents a future scenario that demands proactive consideration and robust international dialogue.

The strategic integration of AI into cyberattacks is not merely an incremental improvement; it represents a fundamental paradigm shift. The cybersecurity community must respond with equal innovation, vigilance, and collaborative spirit to navigate this new era of algorithmic malice effectively. The future of digital security will hinge on our collective ability to harness AI for defense, understand its misuse, and adapt our strategies at the speed of artificial intelligence itself.