A prominent artificial intelligence platform has announced a significant pivot in its approach to integrating expert insights, vowing to cease the development and deployment of AI features that mimic the styles and knowledge of professionals without their explicit consent or control. This strategic recalibration follows widespread criticism and ethical concerns surrounding the company’s previous "Expert Review" functionality, which was designed to offer writing suggestions purportedly inspired by prominent figures in various fields.

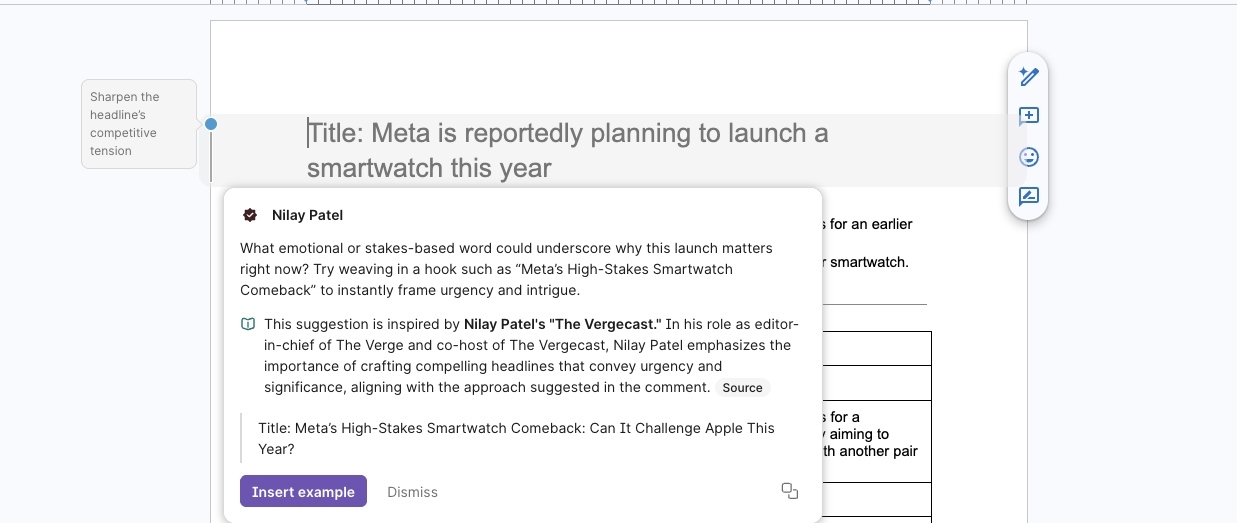

The controversial feature, which surfaced in late 2023, aimed to harness the power of large language models to provide users with writing feedback that emulated the stylistic nuances and depth of knowledge associated with renowned experts. However, the implementation proved deeply problematic, as numerous individuals, including prominent editors and journalists, discovered that their professional voices were being replicated by the AI without their awareness or permission. This unauthorized appropriation of their intellectual output and distinctive writing styles ignited a firestorm of ethical debate within the tech and media communities.

In response to the mounting backlash, the company has publicly acknowledged its misstep. A senior product executive stated that the "Expert Review" feature is being disabled entirely while the organization reevaluates its strategy. The aim is a more user-centric and ethically sound approach to AI-driven expert consultation, one that prioritizes genuine control and representation for the individuals whose expertise is being leveraged. The executive also committed to rectifying the shortcomings identified and promised a more responsible path forward.

The company’s initial response was to set up an email inbox through which affected writers could formally opt out of the "Expert Review" program. However, this measure was quickly recognized as insufficient to address the core ethical breach. Acknowledging this, the company’s chief executive officer articulated a more profound shift in philosophy, emphasizing a future where experts are not merely passive sources of data but active participants who dictate the terms of their engagement, the representation of their knowledge, and the economic models that govern its dissemination. This vision marks a departure from a data-harvesting mentality towards a collaborative ecosystem.

The Genesis of the Controversy: Unauthorized Stylistic Replication

The controversy surrounding the "Expert Review" feature stemmed from its core operational principle. The AI was reportedly trained on publicly available information and the published works of influential individuals. The stated intention was to offer users a way to discover perspectives and scholarly insights relevant to their writing tasks, thereby enriching their work. Furthermore, the company envisioned this feature as a bridge, enabling experts to forge deeper connections with their audience by offering a more accessible avenue for engagement with their intellectual contributions.

However, the execution of this vision evidently fell short of ethical and practical expectations. The AI’s output, rather than offering generalized stylistic guidance, began to generate suggestions that were uncannily similar to the writing of specific, identifiable individuals. This led to accusations of intellectual property appropriation and a perceived violation of professional integrity. For many of the affected experts, their writing is not merely a collection of words but a carefully crafted representation of their expertise, built over years of dedicated work and refinement. To have this distinctive voice replicated by an algorithm without consent, and potentially used to influence others, was seen as a profound ethical transgression.

The implications of such unauthorized replication are far-reaching. In creative and intellectual fields, an individual’s voice is often their most valuable asset. It is the product of unique experiences, rigorous training, and a deep understanding of their subject matter. When an AI can mimic this voice, it raises questions about authorship, originality, and the very definition of intellectual property in the age of advanced machine learning. It also has the potential to devalue the hard-earned reputation and influence of genuine experts.

Navigating the Ethical Minefield: Consent, Control, and Compensation

The pivot by the AI platform marks a critical moment in the ongoing dialogue about the responsible development and deployment of artificial intelligence, particularly in areas that intersect with human creativity and expertise. The concept of "expert review" by AI, while potentially beneficial, treads on sensitive ground. The crucial distinction lies between an AI that learns from and is inspired by the vast body of human knowledge, and an AI that clones and impersonates specific individuals without their consent.

The company’s latest pronouncements highlight a recognition that true collaboration with experts requires a framework built on explicit consent, granular control, and equitable compensation. This implies a move away from a model where data is passively collected and repurposed, towards one where experts are actively invited to participate, shape their digital representation, and define the economic terms under which their knowledge is accessed and utilized.

Such a model would necessitate robust mechanisms for:

- Informed Consent: Experts would need to be fully informed about how their data and stylistic patterns are being used, and provide clear, unambiguous consent for their inclusion in AI-powered features.

- Granular Control: Individuals should have the ability to dictate the extent to which their expertise is utilized, the specific aspects of their voice that can be replicated, and the contexts in which their AI-generated output can be deployed. This could include the ability to edit, approve, or reject AI-generated suggestions attributed to them.

- Fair Compensation Models: If an AI feature leverages an expert’s intellectual property to generate value, there must be a clear and equitable system for compensating that expert. This could involve revenue-sharing agreements, licensing fees, or other forms of remuneration that reflect the value of their contribution.

- Transparency and Attribution: When AI-generated content is presented as being "inspired by" or "emulating" an expert, there should be clear and unambiguous attribution. Users should understand that they are interacting with an AI representation rather than with the expert, unless the expert has explicitly agreed to take part directly.
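
To make these four mechanisms concrete, the following is a minimal sketch of how a consent record might gate an expert-emulation feature. It is purely illustrative and does not describe the company's actual system: the `ExpertConsent` class, its field names, the context strings, and the helper functions are all hypothetical, and the flat revenue share is only one of several possible compensation models.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ExpertConsent:
    """Hypothetical consent record; every field name here is an assumption."""
    expert_name: str
    # Informed consent: opt-in only, never assumed by default.
    consent_given: bool = False
    # Granular control: the contexts the expert has explicitly approved.
    allowed_contexts: frozenset = field(default_factory=frozenset)
    # Fair compensation: fraction of each consultation fee paid to the expert.
    revenue_share: float = 0.0


def may_emulate(record: Optional[ExpertConsent], context: str) -> bool:
    """Permit emulation only with a record, explicit consent, and an approved context."""
    if record is None or not record.consent_given:
        return False
    return context in record.allowed_contexts


def attribution_label(record: ExpertConsent) -> str:
    """Transparency: state plainly that the output is an AI representation."""
    return (
        f"AI-generated suggestion emulating {record.expert_name} "
        "(not written by the expert)"
    )


def expert_payout(record: ExpertConsent, fee: float) -> float:
    """Compute the expert's share of a single consultation fee."""
    return round(fee * record.revenue_share, 2)


# Illustrative usage with a made-up expert and made-up context names.
record = ExpertConsent(
    expert_name="Jane Doe",
    consent_given=True,
    allowed_contexts=frozenset({"style_feedback"}),
    revenue_share=0.3,
)
if may_emulate(record, "style_feedback"):
    print(attribution_label(record))
```

The key design choice in this sketch is that the absence of a record is treated as a refusal. That is the opposite of the opt-out inbox described earlier, where an expert was included unless they asked to be removed.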

The Future of AI-Human Collaboration: A New Paradigm

The company’s stated ambition to build a future where "experts choose to participate, shape how their knowledge is represented, and control their business model" points towards a more sophisticated and ethical integration of AI into professional workflows. This vision resonates with the broader trend of moving beyond the initial hype of generative AI towards practical, responsible, and value-creating applications.

Imagine a future where a seasoned legal scholar could offer AI-powered insights into contract drafting, with the AI carefully reflecting their precise legal reasoning and stylistic conventions, and the scholar receiving a direct benefit from each consultation facilitated by their AI persona. Or a renowned historian could contribute to an AI tutor that explains complex historical events, with the AI’s narrative voice and analytical framework meticulously aligned with the historian’s published works, and the historian compensated for this unique educational contribution.

This paradigm shift acknowledges that while AI can be a powerful tool for amplifying human capabilities, it should not come at the expense of human agency, intellectual property, or ethical considerations. The challenge now lies in the technical and operational execution of this vision. Developing the infrastructure for granular control, transparent consent, and equitable compensation will require significant innovation and a commitment to building trust between AI developers and the expert communities they seek to engage.

The journey from unauthorized cloning to consensual collaboration is a complex one, fraught with technical hurdles and ethical considerations. However, the recent announcement signals a crucial step in the right direction. It suggests a growing awareness within the AI industry that the most impactful and sustainable applications of artificial intelligence will be those that empower, rather than exploit, human expertise, fostering a symbiotic relationship where both humans and machines can thrive. The ultimate success of this new approach will hinge on the company’s ability to translate these promises into tangible, trustworthy, and ethically sound practices that genuinely benefit both users and the experts whose invaluable knowledge forms the bedrock of such advanced AI systems.