Synthetic content is flooding the digital landscape, raising critical questions about authenticity and trust. Industry leaders speak of the need to combat AI-generated "slop," yet the platforms they run are accelerating the development and spread of generative AI tools, creating an unsettling paradox.

At the close of 2025, a prominent social media executive voiced concern about how artificial intelligence is eroding digital authenticity. The executive described a landscape in which the essence of human creativity and connection (the ability to be genuine, to foster meaningful interactions, and to have a unique voice that cannot be replicated) is being commodified and copied by sophisticated AI tools. The proposed remedy was a robust system of content authentication, such as cryptographic signatures applied by camera manufacturers to create a verifiable chain of custody for media. In theory, this would make it possible to identify content that was not generated by AI.

However, the technology behind such a solution already exists in the form of the Coalition for Content Provenance and Authenticity (C2PA) standard and its associated Content Credentials. Despite adoption by major technology firms, the implementation is falling short of stemming the influx of AI-generated material. Instead, these initiatives are increasingly perceived as superficial gestures, a smokescreen that allows platforms to continue their aggressive push into generative AI development. The contradiction is particularly evident as these same platforms invest heavily in advanced AI video editing tools and other generative capabilities.

The increasing sophistication of AI in mimicking reality poses a significant threat to the established cultural and economic frameworks of social media platforms. These platforms have long cultivated ecosystems built around the unique contributions of human creators. AI can now effortlessly replicate popular trends, generate photorealistic images of non-existent individuals, and produce a constant stream of visually homogenous content that already saturates social media feeds. While creators are attempting to differentiate themselves by embracing raw and imperfect aesthetics, AI is proving adept at mimicking these styles as well. More alarmingly, AI’s capacity for rapid content generation is being exploited to disseminate misinformation on critical societal issues. Examples include the weaponization of AI to spread false narratives surrounding protests or to generate fabricated accounts of sensitive events, blurring the lines between verifiable reality and sophisticated digital fabrication.

In recent years, a number of prominent technology companies have publicly aligned themselves with provenance-based standards such as C2PA as a nominal means of combating synthetic media. C2PA, founded by industry leaders including Adobe, Arm, the BBC, Intel, Microsoft, and Truepic, aims to establish a verifiable history for digital content. As the aforementioned executive described it, the approach is not to label AI-generated content as false, but to authenticate content that originates from human creation. It does so by embedding metadata at the point of capture or during editing. That metadata provides a verifiable record of who created the content, when, and under what conditions, including whether AI was used. Meta joined the steering committee in September 2024 with the stated goal of fostering a healthier digital ecosystem, which underscores the perceived importance of such standards.
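The idea of binding a record of origin to a piece of media can be sketched in a few lines. This is a simplified illustration, not the C2PA format or any real library's API: the function names, the `DEVICE_KEY`, and the claim fields are all hypothetical, and HMAC from the standard library stands in for the certificate-based signatures that real credentials rely on.

```python
import hashlib
import hmac
import json

# Hypothetical device key. Real credentials use public-key signatures backed
# by certificates; HMAC is only a standard-library stand-in for this sketch.
DEVICE_KEY = b"hypothetical-camera-signing-key"


def sign_manifest(content: bytes, claims: dict) -> dict:
    """Bind the claims to a hash of the content and sign the result."""
    payload = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "claims": claims,
    }
    encoded = json.dumps(payload, sort_keys=True).encode()
    signature = hmac.new(DEVICE_KEY, encoded, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Return True only if the signature is valid and the content is unchanged."""
    encoded = json.dumps(manifest["payload"], sort_keys=True).encode()
    expected = hmac.new(DEVICE_KEY, encoded, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claims or the signature were tampered with
    current_hash = hashlib.sha256(content).hexdigest()
    return manifest["payload"]["content_sha256"] == current_hash


photo = b"raw sensor bytes"
manifest = sign_manifest(photo, {"creator": "example-photographer", "ai_used": False})
print(verify_manifest(photo, manifest))            # original content
print(verify_manifest(photo + b"edit", manifest))  # content altered after signing
```

The check only ever confirms that a signed record matches the file in hand. It says nothing about a file that arrives with no record at all, which is where most of the difficulties discussed in this piece begin.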

Despite broad industry endorsement, including participation from giants like Microsoft, Meta, Google, OpenAI, TikTok, and Qualcomm, the C2PA represents just one facet of a multi-pronged approach to content verification. Critically, its current implementation is demonstrably insufficient to shield users from the pervasive issue of AI-generated "slop" and deceptive deepfakes. The onus of identifying and verifying content often falls upon individual users, many of whom remain unaware of the existence or function of such authentication systems. This places an untenable burden on the public, while AI developers appear to leverage these standards as a means of distancing themselves from the problem they are actively exacerbating.

The embrace of C2PA and related systems, such as Google’s SynthID watermarking, by major technology corporations represents a strategic but potentially inadequate response to the challenge of AI-generated content. While these methods offer a degree of traceability, provenance-based solutions are inherently limited. Their effectiveness hinges on universal adoption across the entire media creation and distribution pipeline, a scenario that is logistically improbable. Camera manufacturers such as Canon, Nikon, Sony, Fujifilm, and Leica are integrating C2PA gradually, primarily in new model releases, which illustrates how slow and incomplete this adoption is. As representatives from Leica Camera USA have noted, older equipment, which makes up the vast majority of existing devices, will continue to produce content that depends on traditional verification methods like context, reputation, and editorial oversight.

Furthermore, the inherent fragility of provenance metadata presents a significant vulnerability. OpenAI, a key participant in the C2PA initiative, acknowledges that such metadata can be "easily removed either accidentally or intentionally." Platforms like LinkedIn and TikTok have demonstrated a persistent inability to reliably tag content that should, by design, carry C2PA metadata. While YouTube employs a combination of C2PA, Google’s SynthID, and other systems for AI content labeling, these efforts are often inconsistent and difficult for users to discern. The very definition of "real" versus "fake" in the context of digital media is a complex and evolving challenge. Meta’s previous missteps, such as incorrectly applying "Made by AI" labels to authentic photographs on Instagram, alienated a significant portion of the photography community and underscored the difficulties in accurately categorizing digital content.
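The fragility is easy to see in a toy model. The sketch below is illustrative only: `MediaFile`, `reencode`, and `provenance_status` are hypothetical names, and the re-encoding step is an assumed stand-in for any screenshot, export, or upload pipeline that keeps the pixels but discards the metadata.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MediaFile:
    pixels: bytes
    metadata: dict = field(default_factory=dict)  # where a credential would live


def reencode(media: MediaFile) -> MediaFile:
    """Hypothetical pipeline step: keeps the pixels, drops all metadata."""
    return MediaFile(pixels=media.pixels)


def provenance_status(media: MediaFile) -> str:
    """Report what a viewer can conclude from the metadata alone."""
    credential: Optional[dict] = media.metadata.get("content_credential")
    if credential is None:
        return "unknown"  # absence of a credential proves nothing either way
    return "ai-generated" if credential.get("ai_used") else "camera-captured"


original = MediaFile(b"pixel data", {"content_credential": {"ai_used": True}})
print(provenance_status(original))
print(provenance_status(reencode(original)))
```

Once the credential is gone, the AI-generated file is indistinguishable from any unlabeled photograph, so a missing label cannot be read as evidence of authenticity.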

In response to these challenges, Meta has since rebranded its labels to "AI info," making them considerably less conspicuous. These labels, often presented in minuscule text beneath an account name on the Instagram app, are intermittently obscured by other post details like song titles. Accessing the full "AI info" requires users to navigate through a three-dot menu. The desktop version of Instagram frequently omits these labels entirely, even when present on the mobile application. In the absence of any visual indicators or labels, users are expected to manually verify suspicious content through browser extensions or by uploading it to official C2PA verification websites, a process that is both cumbersome and largely unknown to the average user.

The limitations of C2PA as a comprehensive solution for AI content detection are well documented. While its adoption is expanding and any system, however imperfect, is preferable to none, it was not designed to be a universal tool for detecting deepfakes or mitigating AI "slop." As industry experts acknowledge, C2PA addresses a specific set of problems rather than offering an all-encompassing solution. The conspicuous absence of X (formerly Twitter) further highlights the fragmented and incomplete nature of industry-wide efforts. Despite being a founding member, X withdrew following its acquisition, leaving a major platform, where news spreads rapidly and influential figures engage with their audiences, without any commitment to content provenance. This absence is particularly concerning given reports of Grok, X’s AI chatbot, generating problematic content and Elon Musk himself sharing misleading deepfakes. X’s substantial user base is left exposed to unverified and potentially fabricated information.

The CEO of Reality Defender, Ben Colman, posits that the unchecked proliferation of AI-generated content would not be occurring if C2PA alone were a viable solution. He argues that an exclusive reliance on labeling and watermarking systems presumes that malicious AI content is exclusively produced by a limited set of tools, a fundamentally flawed assumption that underpins the moderation strategies of major social platforms. Furthermore, recent academic research suggests that even effective transparency warnings may prove insufficient in mitigating the harm caused by AI-generated deepfakes, citing a lack of empirical evidence supporting the efficacy of AI transparency measures.

Despite these shortcomings, the prevailing narrative within the industry persists: that standards like C2PA represent a crucial step in the ongoing development of authenticity and deepfake detection systems, and that these systems are still in a developmental phase. Industry representatives acknowledge the potential frustration with the pace of progress but maintain that enhanced visibility of C2PA across online platforms is forthcoming, albeit at a slower rate than desired.

The inherent conflict of interest is stark: if AI providers such as Meta and Google were genuinely committed to protecting users from deception, they would temper the release and advancement of generative AI tools that contribute to the problem until viable solutions exist. Instagram’s embrace of an AI-centric alternative, and OpenAI’s launch of a TikTok clone featuring AI-generated videos that infringed on copyrights and impersonated individuals, directly contradict any purported commitment to preserving digital authenticity. While platforms like YouTube publicly pledge to combat AI-generated "slop," they simultaneously encourage creators to use their parent company’s AI models for video production, creating a paradoxical incentive structure.

This dynamic reveals a calculated strategy by AI providers involved in C2PA: they aim to benefit from the advancement of AI technology while abdicating responsibility for controlling the misuse of their creations. Revenue from subscriptions for enhanced image and video generation, the significant presence of AI-generated content on platforms like YouTube, and the impending introduction of premium AI features by Meta all point to a business model that thrives on the very content it purports to regulate. As industry observers have noted, inflammatory or controversial content, including AI-generated material, drives engagement, so platforms that depend on user attention for advertising revenue have little incentive to suppress it.

Beyond the distribution of harmful misinformation, generative AI also contributes to a general degradation of the online experience. The proliferation of bizarre and attention-grabbing AI-generated imagery, such as the viral "shrimp Jesus" phenomenon, exemplifies how AI can flood digital spaces with content that, while not overtly harmful, serves to dilute genuine human expression and necessitates increased user effort in filtering and discerning valuable content. The reduction in skill and time barriers for visual content creation further exacerbates this deluge, creating a competitive landscape where authentic media struggles to capture attention amidst an overwhelming tide of synthetic material.

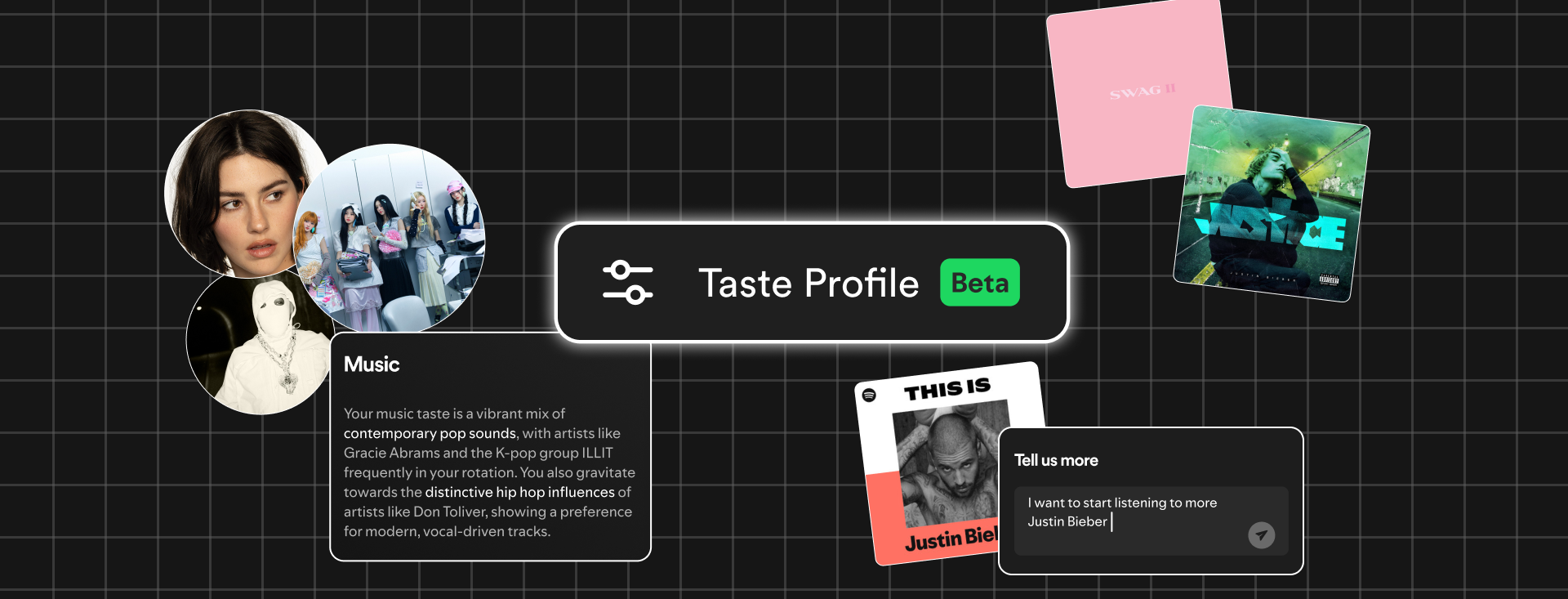

Ultimately, the pursuit of authenticating online content appears to be an uphill battle. While progress is being made, C2PA operates as a voluntary framework, an honor system that was never realistically positioned to serve as an ultimate deepfake solution. Emerging strategies are now exploring the analysis of creators themselves, rather than solely focusing on the content they produce. Instagram, for instance, is shifting its focus to "who says something, instead of what is being said," aiming to elevate the credibility of human voices. YouTube’s moderation approach following significant tragic events, prioritizing content with public interest value and directing users towards official news sources, represents a partial implementation of this creator-centric strategy. However, this is juxtaposed with Google’s increasing reliance on AI-generated summaries for news headlines, often introducing inaccuracies and further complicating the pursuit of reliable information.

Any initiative that effectively prevents synthetic material from being mistaken for human-made work directly conflicts with the financial interests of companies heavily invested in AI development. This raises serious questions about how far these companies can genuinely prioritize the integrity of digital information. The assertion that AI has effectively "won the war on reality" reflects a growing sentiment that synthetic content has fundamentally altered the digital landscape, making it increasingly difficult for genuine human expression to stand out in an environment of "infinite abundance and infinite doubt." The notion that navigating this environment is as simple as being "real, transparent, and consistent" overlooks the technological and systemic challenges that have rendered basic verification mechanisms like community notes and "I am not a robot" checks insufficient.