Recent threat intelligence reports describe a significant escalation in cyber warfare: sophisticated threat actors, including state-sponsored groups, are integrating advanced generative AI models into their operations and using them to support every phase of a campaign, from initial reconnaissance to post-compromise activity. Large language models (LLMs) give malicious actors new ways to automate, refine, and scale their work, changing the calculus of both cyber offense and cyber defense.

The shift by prominent nation-state hacking groups toward commercial AI platforms marks a turning point in cybersecurity. These groups, linked to China, Iran, North Korea, and Russia, are not merely experimenting with AI; they are embedding it in their established methods. Their use of models such as Google’s Gemini spans the entire attack lifecycle: identifying targets, crafting social engineering lures, developing and refining malicious code, testing discovered vulnerabilities, and carrying out post-exploitation activity. This shows that hostile actors have moved beyond theoretical applications of AI to practical operational use.

The AI-Augmented Attack Lifecycle

Threat actors are using generative AI at multiple stages of a cyber intrusion.

1. Advanced Reconnaissance and Open-Source Intelligence (OSINT):

In the preliminary phase of an attack, LLMs help with reconnaissance and target profiling. Threat actors use them to synthesize large volumes of publicly available information far faster than manual research allows: identifying key personnel, mapping organizational structures, inferring technology stacks, and highlighting likely weaknesses based on industry-specific risk profiles. By feeding an LLM disparate data points, adversaries can quickly produce intelligence dossiers that would otherwise take extensive manual effort, and the model's ability to correlate seemingly unrelated details streamlines target selection and vulnerability assessment.

2. Sophisticated Social Engineering and Phishing Campaigns:

Social engineering is among the most immediate and impactful applications of generative AI. Beyond generic phishing lures, LLMs can craft highly personalized, contextually relevant, and grammatically flawless messages that are more likely to succeed. Actors linked to China (e.g., APT31, Temp.HEX) have been observed using Gemini for this purpose, producing convincing narratives tailored to specific targets. These lures can mimic legitimate communications by incorporating industry jargon, organizational details, and even individual writing styles, which makes them hard for recipients to recognize as malicious. Because an LLM can rapidly generate variants of a message, attackers can also A/B test different versions and refine their campaigns to better compromise credentials or deliver malware.

The Iranian group APT42, for instance, has used LLMs to strengthen its social engineering campaigns. AI can generate dynamic, adaptive conversational flows that lead a target through a sequence of interactions designed to extract information or prompt specific actions.

Cybercriminals are following the same trend, using generative AI services in so-called "ClickFix" campaigns. In these schemes, malicious advertisements are placed prominently in search engine results for common troubleshooting queries. Users looking for a fix to a technical problem are lured into running malicious commands, often resulting in the installation of information-stealing malware such as the AMOS stealer targeting macOS. The campaigns succeed because they exploit users' trust in search engines and the apparent usefulness of the AI-generated instructions.
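From the defender's side, the commands these lures ask users to paste commonly share recognizable shapes, such as a remote script piped straight into a shell or a base64-encoded payload decoded into one. The sketch below is a minimal, hypothetical heuristic for flagging such commands, for example in an endpoint or help-desk tool. The rule names, the regular expressions, and the `flag_risky_command` function are illustrative assumptions, not a vetted detection ruleset; real campaigns vary and obfuscate their commands.

```python
import re

# Hypothetical heuristic rules (illustrative only, not a vetted ruleset).
# Each label maps to a pattern often seen in "paste this into Terminal" lures.
RISKY_PATTERNS = {
    # Remote content fetched and piped straight into a shell interpreter.
    "remote-script-piped-to-shell": re.compile(
        r"\b(curl|wget)\b[^|;&]*\|\s*(sudo\s+)?(bash|sh|zsh)\b"
    ),
    # Base64-decoded data piped into a shell, which hides the real payload.
    "encoded-payload-to-shell": re.compile(
        r"\bbase64\s+(-d|-D|--decode)\b.*\|\s*(sudo\s+)?(bash|sh|zsh)\b"
    ),
    # AppleScript execution, which can be used to display fake password dialogs.
    "applescript-execution": re.compile(r"\bosascript\b"),
}


def flag_risky_command(command: str) -> list[str]:
    """Return the labels of every heuristic rule that the command matches."""
    return [
        label
        for label, pattern in RISKY_PATTERNS.items()
        if pattern.search(command)
    ]


if __name__ == "__main__":
    for cmd in [
        "curl -fsSL https://example.com/install.sh | bash",
        "echo aGVsbG8= | base64 -d | sh",
        "ls -la ~/Downloads",
    ]:
        print(cmd, "->", flag_risky_command(cmd))
```

A match is a reason to pause and review, not proof of malice: some legitimate installers are also distributed as `curl ... | bash` one-liners.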

3. Accelerated Malware Development and Exploitation:

AI also plays a substantial role in malware creation and vulnerability exploitation. Threat actors use LLMs as development aids to speed up the creation of tailored malicious tools: debugging existing code, generating new functional modules, and researching exploitation techniques. The reduced learning curve is particularly concerning because it gives less skilled adversaries access to advanced coding capabilities they could not otherwise build.

- Vulnerability Analysis and Testing: Chinese threat actors have prompted AI models to adopt expert cybersecurity personas in order to automate vulnerability analysis. In fabricated scenarios, they instructed Gemini to analyze Remote Code Execution (RCE) issues, Web Application Firewall (WAF) bypass techniques, and SQL injection test results, in some cases naming US-based targets. This lets adversaries simulate attack scenarios, identify logical flaws in target systems, and refine their attack vectors more precisely and efficiently.

- Code Generation and Refinement: Another China-based actor was observed frequently using Gemini to fix coding errors, conduct technical research, and seek advice on capabilities relevant to intrusions. This kind of iterative assistance can speed up the development of robust, stealthy malware.

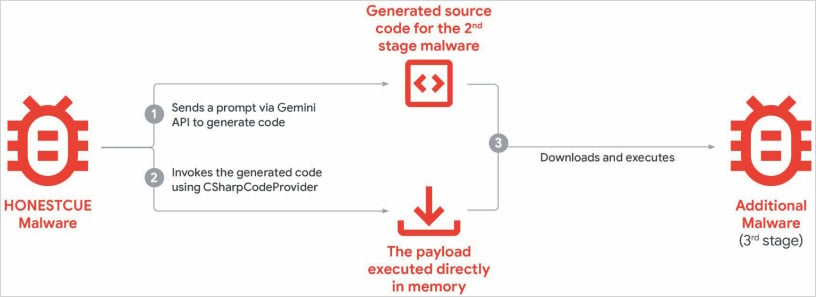

- AI-Enhanced Malware Frameworks: AI capabilities are also being built into malware itself. The "HonestCue" downloader and launcher, a proof-of-concept observed in late 2025, uses the Gemini API to generate C# code for its second-stage payloads, which are then compiled and executed directly in memory to minimize forensic artifacts. Similarly, the "CoinBait" phishing kit, a React single-page application (SPA) designed to harvest credentials for cryptocurrency exchanges, shows strong signs of AI-assisted development: artifacts in its source code, such as logging messages prefixed with "Analytics:" and references to the "Lovable AI platform" and "lovable.app," suggest the use of AI code generation tools. These examples point toward malware that is more modular, more adaptive, and harder to detect.
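Defenders triaging a suspected kit like CoinBait could search its source for the artifacts named above. The following minimal sketch counts those strings in web source files; the names `INDICATORS`, `SOURCE_SUFFIXES`, `scan_text`, and `scan_tree` are hypothetical, and the file-suffix list is an assumption. Because Lovable is a legitimate development platform, a hit only suggests AI-assisted development, not malicious intent, and should be treated as a lead for further analysis.

```python
import re
from pathlib import Path

# Indicator strings taken from the public description of CoinBait.
# They point to AI-assisted development, not malice by themselves.
INDICATORS = {
    # A string literal starting with "Analytics:", e.g. console.log("Analytics: ...").
    "analytics-log-prefix": re.compile("[\"'`]Analytics:"),
    # The Lovable hosting domain, in any letter case.
    "lovable-domain": re.compile(r"\blovable\.app\b", re.IGNORECASE),
    # A capitalized mention of the Lovable platform name.
    "lovable-platform-mention": re.compile(r"\bLovable\b"),
}

# Assumed set of file types worth scanning in an extracted SPA bundle.
SOURCE_SUFFIXES = {".js", ".jsx", ".ts", ".tsx", ".html", ".json", ".map"}


def scan_text(text: str) -> dict[str, int]:
    """Count each indicator in one file's contents, omitting zero counts."""
    counts = {name: len(p.findall(text)) for name, p in INDICATORS.items()}
    return {name: n for name, n in counts.items() if n}


def scan_tree(root: str) -> dict[str, dict[str, int]]:
    """Scan web source files under root and return per-file indicator counts."""
    results = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in SOURCE_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="replace")
            hits = scan_text(text)
            if hits:
                results[str(path)] = hits
    return results
```

For example, `scan_tree("./extracted_kit")` (a hypothetical directory) returns a mapping from each matching file path to its indicator counts.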

4. Command and Control (C2) Development and Post-Compromise Actions:

Current observations of this stage are less detailed, but the potential for AI to aid C2 development and post-compromise activity is substantial. LLMs could help build more resilient, dynamic C2 channels with varied communication patterns that evade detection. AI could also help automate data exfiltration, identify the most valuable data, and devise ways to maintain persistence in compromised networks while adapting to defensive measures in real time.

The Emergence of Model Extraction and Knowledge Distillation

Beyond direct operational use in attacks, a subtler but commercially significant threat has emerged: AI model extraction and knowledge distillation. Here an actor, often using authorized API access, systematically queries an advanced AI system such as Gemini to reproduce its behavior. The goal is to replicate the model’s functionality, or a substantial part of it, in a separate model, often one that is smaller or under the actor's local control.

Knowledge distillation transfers what a larger, more complex "teacher" model has learned to a smaller, more efficient "student" model. The practice does not directly threaten the data or security of the original model's end users, but it poses a serious commercial, competitive, and intellectual property problem for the model's creators: it lets adversaries or competitors accelerate their own AI development at far lower cost, without the extensive investment in research and training that the original required.
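The teacher-student idea can be made concrete with the classic soft-target formulation from Hinton et al. (2015): the student is trained to match the teacher's temperature-softened output probabilities, measured with KL divergence and scaled by T². The sketch below computes only that loss term for a single example in plain Python; the function names are illustrative. An actor extracting a model through an API usually sees generated text (at most a few token log-probabilities) rather than full logits, so in that setting the student is more often fine-tuned directly on the teacher's responses.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: list[float], q: list[float]) -> float:
    """KL(p || q) for discrete distributions, assuming q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(
    teacher_logits: list[float],
    student_logits: list[float],
    temperature: float = 2.0,
) -> float:
    """Soft-target loss: T^2 * KL(teacher || student), both softened at temperature T."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return temperature ** 2 * kl_divergence(p_teacher, p_student)
```

In full training this term is usually combined with an ordinary cross-entropy loss on hard labels; the loss is zero when the student's softened distribution exactly matches the teacher's.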

Google’s Threat Intelligence Group (GTIG) flags these attacks as a form of intellectual property theft that is highly scalable and undermines the AI-as-a-service business model. In one notable large-scale attack against Gemini, an actor issued approximately 100,000 prompts, posing a diverse range of questions designed to replicate the model’s reasoning across many tasks, particularly in non-English languages. An effort of this scale shows both the intent and the capability to systematically reverse-engineer the proprietary knowledge embedded in advanced AI models.

Google’s Response and Broader Industry Implications

In response, Google has taken targeted defensive measures: disabling accounts and infrastructure tied to documented abuse, and deploying improved security classifiers in Gemini to hinder future misuse. The company says it remains committed to building AI systems with robust security protocols and strict safety guardrails, and it regularly tests its models to improve their resilience and integrity.

The spread of AI in cyber operations poses multifaceted challenges for the global cybersecurity community. For AI developers, embedding "secure by design" principles from the start of model development is essential. This includes advanced threat detection, robust access controls, and continuous monitoring for anomalous usage patterns that could indicate malicious intent or unauthorized knowledge extraction.
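As one illustration of such monitoring: the extraction campaign against Gemini described earlier combined high prompt volume with a wide diversity of questions. The sketch below is a hypothetical heuristic, not Google's actual method, that flags accounts exceeding both a volume threshold and a prompt-diversity ratio. `PromptEvent`, `flag_extraction_candidates`, and the default thresholds are assumptions; a production system would add time windows, semantic similarity between prompts, and other signals.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptEvent:
    """One logged API request (hypothetical log schema)."""
    account_id: str
    prompt: str


def flag_extraction_candidates(
    events: list[PromptEvent],
    volume_threshold: int = 10_000,
    diversity_threshold: float = 0.9,
) -> list[str]:
    """Return account IDs with high prompt volume and mostly distinct prompts.

    The default thresholds are illustrative placeholders, not tuned values.
    """
    prompts_by_account: dict[str, list[str]] = defaultdict(list)
    for event in events:
        # Normalize case and whitespace so trivial variants count as one prompt.
        normalized = " ".join(event.prompt.lower().split())
        prompts_by_account[event.account_id].append(normalized)

    flagged = []
    for account, prompts in prompts_by_account.items():
        distinct_ratio = len(set(prompts)) / len(prompts)
        if len(prompts) >= volume_threshold and distinct_ratio >= diversity_threshold:
            flagged.append(account)
    return sorted(flagged)
```

Requiring both conditions is a deliberate choice: high volume alone also describes heavy but repetitive legitimate use (for example, the same automated query sent many times), while diversity alone describes ordinary low-volume users.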

For defenders, the rise of AI-powered attacks necessitates a parallel evolution in defensive capabilities. This includes leveraging defensive AI to detect sophisticated, AI-generated threats, automating incident response, and developing proactive threat intelligence capabilities that can anticipate emerging AI-driven attack vectors. The "AI arms race" in cybersecurity is accelerating, with both offensive and defensive applications of AI rapidly advancing.

The implications extend beyond technical countermeasures. Regulatory frameworks may need to evolve to address the ethical and legal complexities of AI misuse, particularly concerning intellectual property rights in the context of model extraction and the potential for AI to amplify the scale and impact of cyber warfare. The skills gap in cybersecurity could also be affected; while AI might lower the technical barrier for some attackers, it simultaneously offers powerful tools for defenders, emphasizing the need for skilled professionals who can effectively wield these advanced technologies.

In conclusion, the integration of generative AI into the arsenal of nation-state hackers and cybercriminals marks a significant inflection point in the cyber threat landscape. While AI offers transformative benefits, its dual-use nature necessitates an intensified focus on security, ethical development, and proactive defense strategies to safeguard digital ecosystems against an increasingly intelligent and adaptive adversary. The ongoing developments underscore the critical need for continuous vigilance, collaborative intelligence sharing, and persistent innovation in cybersecurity research and development.