The seemingly innocuous PDF, a format ubiquitous in professional and governmental settings, remains a surprisingly formidable obstacle for artificial intelligence, forcing a reevaluation of AI’s practical reach in processing real-world data.

The inherent complexity of the Portable Document Format (PDF) has emerged as a significant bottleneck in the otherwise meteoric rise of artificial intelligence, exposing a persistent gap between AI’s theoretical capabilities and its practical ability to decipher a format that underpins vast repositories of critical information. AI has demonstrated remarkable prowess in generating code, solving intricate scientific problems, and even composing creative content. Yet the humble PDF, designed for visual fidelity rather than machine readability, continues to frustrate attempts to extract and analyze its contents with the speed and accuracy expected of cutting-edge technology. This challenge is not merely academic; it has tangible implications for how governments, legal institutions, and businesses manage and leverage their extensive digital archives.

The genesis of this problem can be traced back to the very design principles of the PDF. Adobe developed the format in the early 1990s with one primary objective: to ensure that documents appeared precisely the same across operating systems and devices and, crucially, when printed. This focus on visual presentation, while admirable for its intended purpose, resulted in a format that treats text not as sequential, logical data, but as a series of graphical elements with specific positioning instructions. Unlike formats such as HTML, which structure content semantically, a PDF essentially describes how to paint a page, so the order and grouping of the underlying text are difficult for machines to recover.
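To make the positioning problem concrete, here is a minimal Python sketch. It assumes a hypothetical `Fragment` record (a run of text plus page coordinates), which is a deliberate simplification and not the data model of any real PDF library. It illustrates that the order in which fragments are drawn need not match the order in which a person reads them, so a parser has to rebuild reading order from coordinates.

```python
from dataclasses import dataclass


@dataclass
class Fragment:
    """Hypothetical stand-in for one positioned run of text on a page."""
    text: str
    x: float  # distance from the left edge
    y: float  # distance from the top edge (a simplification; PDF's default origin is bottom-left)


# Fragments in the order they might be drawn, which is not reading order.
draw_order = [
    Fragment("description.", x=60, y=40),
    Fragment("The PDF", x=20, y=20),
    Fragment("page", x=20, y=40),
    Fragment("is a", x=90, y=20),
]


def in_draw_order(fragments):
    """Concatenate text exactly as it appears in the drawing sequence."""
    return " ".join(f.text for f in fragments)


def in_reading_order(fragments):
    """Rebuild reading order: top to bottom, then left to right."""
    ordered = sorted(fragments, key=lambda f: (f.y, f.x))
    return " ".join(f.text for f in ordered)


print(in_draw_order(draw_order))
print(in_reading_order(draw_order))
```

Sorting by position is enough for this toy single-column page; real documents need far more than a coordinate sort.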

Optical Character Recognition (OCR) technology, a vital tool in converting image-based text into machine-readable data, often falters when confronted with the intricate layouts common in PDFs. Documents with multiple columns, tables, diagrams, footnotes, and headers present a significant challenge. Standard OCR algorithms, designed to read left to right, can easily become disoriented by complex formatting and produce garbled, unintelligible output. Even advanced AI models, when tasked with processing PDFs, frequently summarize the whole document instead of extracting the specific information requested, mistake footnotes for body text, or, worse, fabricate content entirely, a phenomenon known as hallucination. Parsing a PDF with a general-purpose AI model can also be computationally expensive and time-consuming, and the results are often inconsistent and unreliable.
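The multi-column failure is easy to reproduce in miniature. The Python sketch below uses a hypothetical `Line` record and an assumed page width of 600 units; the midpoint split is an illustrative heuristic, not how any particular OCR engine works. It contrasts a row-by-row, left-to-right reading with one that finishes the left column before starting the right.

```python
from dataclasses import dataclass


@dataclass
class Line:
    """Hypothetical line of recognized text with its top-left position."""
    text: str
    x: float
    y: float


PAGE_WIDTH = 600  # assumed page width, in arbitrary units

page = [
    Line("Left column, line 1.", x=40, y=100),
    Line("Right column, line 1.", x=340, y=100),
    Line("Left column, line 2.", x=40, y=120),
    Line("Right column, line 2.", x=340, y=120),
]


def naive_order(lines):
    """Read row by row, left to right, ignoring column boundaries."""
    return [l.text for l in sorted(lines, key=lambda l: (l.y, l.x))]


def column_aware_order(lines, page_width=PAGE_WIDTH):
    """Read the whole left column first, then the right (midpoint heuristic)."""
    mid = page_width / 2
    left = sorted((l for l in lines if l.x < mid), key=lambda l: l.y)
    right = sorted((l for l in lines if l.x >= mid), key=lambda l: l.y)
    return [l.text for l in left + right]


print(naive_order(page))         # columns interleaved
print(column_aware_order(page))  # each column read in full
```

A fixed midpoint breaks as soon as a page has three columns, a full-width table, or a sidebar, which is part of why layout detection is usually treated as a learned task rather than a rule.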

This difficulty is compounded by the fact that large language models (LLMs) have historically been trained on relatively clean, text-based datasets, often derived from the internet’s HTML content. PDFs, by contrast, represent a massive, untapped reservoir of high-quality, structured information that has been underrepresented in training corpora. Government reports, academic journals, textbooks, legal filings, and technical manuals—all predominantly distributed as PDFs—contain a wealth of knowledge that AI systems have struggled to access. This scarcity of dedicated PDF training data has meant that even sophisticated AI models often lack the nuanced understanding required to navigate the visual and structural complexities inherent in the format.

The scale of this data challenge became starkly apparent in the wake of high-profile document releases, such as the extensive Epstein estate files. When government bodies, like the Department of Justice, release millions of documents in PDF format, the limitations of existing OCR technology become glaringly obvious. Even when these documents undergo basic OCR processing, the output is often of such poor quality that the files are effectively unsearchable. Without intuitive interfaces or robust indexing, individuals looking for specific information within these vast digital archives are left to sift through documents manually, relying on sheer luck and the hope that a particular document ID contains what they need. This inefficiency severely hinders transparency, accountability, and the ability to conduct thorough investigations or research.

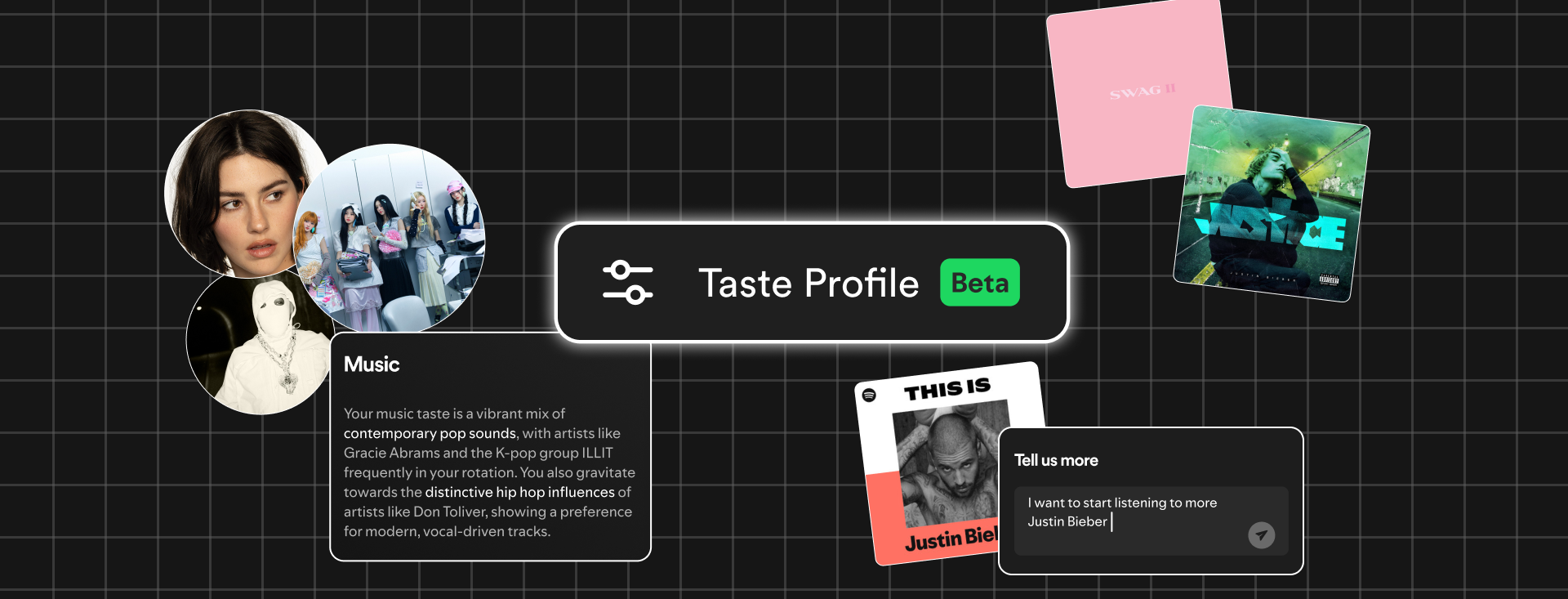

Recognizing this critical gap, a growing number of researchers and technology companies have begun to focus specifically on the challenge of PDF parsing. Edwin Chen, CEO of Surge, an AI company, has categorized PDF processing as one of AI’s "unsexy failures," highlighting its impact on the practical utility of AI in real-world applications. The development of specialized AI models trained on vast collections of PDFs is now seen as a crucial frontier. Researchers at the Allen Institute for AI, for instance, have developed models specifically designed to tackle the intricacies of PDF structure, training them on diverse datasets including public domain books, academic papers, and historical documents. These models are engineered to recognize editorial cues such as headers, footers, and the logical flow of multi-column layouts, significantly improving the accuracy of text extraction.

The PDF format’s resilience in the digital landscape is a testament to its enduring utility. Duff Johnson, CEO of the PDF Association, an organization dedicated to the development of the PDF standard (ISO 32000-2:2020), emphasizes that the format was intentionally designed for permanence and fidelity. Unlike web pages, which can change and render differently based on the browser, or editable documents that can be altered without a trace, PDFs offer a stable, unalterable representation of a document. This characteristic is indispensable for sectors where legal, financial, or scientific accuracy is paramount, including engineering, law, and government. The ability to reliably access historical documents, some dating back to the mid-1990s, without concern for format degradation or alteration underscores the format’s fundamental value.

The evolution of AI’s approach to PDF parsing is moving towards a more sophisticated, multi-stage process. Instead of relying on a single, monolithic model, current efforts often involve a pipeline of specialized AI agents. This mirrors techniques developed for complex domains like self-driving vehicles, where different AI modules are responsible for identifying and interpreting various elements of the environment. In the context of PDFs, an initial "segmentation" model might identify distinct components of a page, such as headers, tables, footnotes, or image captions. These identified components are then passed to further specialized models, each trained to perform specific tasks—for example, one model might be adept at parsing the structure of tables, while another is designed to interpret charts and graphs. A final vision-language model can then review and refine the extracted information, correcting errors and ensuring a higher degree of accuracy.
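The staged design described above can be sketched in a few lines of Python. Everything here is hypothetical: `Region`, `segment_page`, `parse_table`, `parse_text`, and `review` are placeholder names, and each stage is a trivial stub standing in for what would be a trained model in a real system. The point is only the shape of the pipeline: segment, dispatch to a specialist, then review.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Region:
    """Hypothetical page component produced by a segmentation stage."""
    kind: str     # e.g. "header", "table", "footnote", "body"
    content: str  # plain text here; a real system would carry pixels or layout data


def segment_page(page: List[Region]) -> List[Region]:
    """Stage 1 (stub): a real segmentation model would detect regions itself."""
    return page


def parse_table(region: Region) -> dict:
    """Specialist (stub): split a pipe-delimited table into rows and cells."""
    rows = [row.split("|") for row in region.content.splitlines()]
    return {"kind": "table", "rows": rows}


def parse_text(region: Region) -> dict:
    """Fallback specialist (stub): keep the text, trimmed."""
    return {"kind": region.kind, "text": region.content.strip()}


# Map region kinds to specialists; anything unlisted falls back to parse_text.
SPECIALISTS: Dict[str, Callable[[Region], dict]] = {"table": parse_table}


def review(results: List[dict]) -> List[dict]:
    """Stage 3 (stub): a real reviewer would be a vision-language model.
    Here it only drops empty extractions."""
    return [r for r in results if r.get("text") or r.get("rows")]


def run_pipeline(page: List[Region]) -> List[dict]:
    regions = segment_page(page)
    parsed = [SPECIALISTS.get(r.kind, parse_text)(r) for r in regions]
    return review(parsed)


page = [
    Region("header", "Annual Report"),
    Region("table", "Year|Revenue\n2023|10"),
    Region("footnote", "   "),
]
print(run_pipeline(page))
```

Keeping the dispatch table separate from the stages means a new specialist (say, for charts) can be added without touching the rest of the pipeline.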

This modular approach has proven particularly effective in transforming complex visual data within PDFs into usable formats. For instance, charts and graphs can be converted into structured spreadsheet data, a capability highly sought after by financial institutions and researchers. Companies like Reducto, which is actively developing advanced PDF parsing solutions, have leveraged this methodology, drawing on expertise from fields like autonomous driving to tackle the "long tail" of unusual challenges presented by PDFs. These challenges range from documents containing embedded PDFs and legal texts with handwritten annotations to faxes with scrawled notes and diagrams connecting disparate pieces of information across a page.

The sheer volume of PDF data available for training AI models is also driving innovation. Hugging Face, a prominent open-source AI platform, discovered that their extensive web crawls contained billions of PDF files. Recognizing this untapped resource, they developed sophisticated methods to extract trillions of high-quality text tokens from these documents, further enhancing the training datasets for LLMs. This process involved developing techniques to differentiate between easily parsable text-heavy PDFs and more complex ones laden with images and charts, subsequently employing specialized models like Reducto’s RolmOCR to process the more challenging documents.
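A routing step of this kind might look like the Python sketch below. It is an illustration only: the `PageStats` fields, the thresholds, and the route names are all invented for the example and are not taken from Hugging Face's actual pipeline. The idea is simply to send documents with a rich embedded text layer down a cheap path and reserve a heavier OCR model for the rest.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageStats:
    """Hypothetical per-page measurements gathered before parsing."""
    extracted_chars: int     # characters recovered from the embedded text layer
    image_area_ratio: float  # fraction of the page covered by images, 0.0 to 1.0


# Illustrative thresholds, chosen arbitrarily for this sketch.
MIN_AVG_CHARS = 200
MAX_AVG_IMAGE_RATIO = 0.5


def route(pages: List[PageStats]) -> str:
    """Return "text_extraction" for text-heavy documents, else "ocr_model"."""
    if not pages:
        return "ocr_model"
    avg_chars = sum(p.extracted_chars for p in pages) / len(pages)
    avg_images = sum(p.image_area_ratio for p in pages) / len(pages)
    if avg_chars >= MIN_AVG_CHARS and avg_images <= MAX_AVG_IMAGE_RATIO:
        return "text_extraction"
    return "ocr_model"


report = [PageStats(1800, 0.05), PageStats(2100, 0.10)]
scanned_fax = [PageStats(0, 0.95), PageStats(12, 0.90)]
print(route(report))
print(route(scanned_fax))
```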

Despite these advancements, the inherent probabilistic nature of language models means that a 100% guarantee of perfect PDF extraction remains elusive. While AI can now achieve remarkable accuracy, often in the high 90s in percentage terms, the remaining few percent of edge cases (unusual formatting, low-quality scans, or highly complex layouts) continue to pose a significant challenge. This is analogous to the challenges faced by self-driving car technology, where ensuring safe operation in all conceivable road conditions requires continuous refinement and robust handling of unforeseen circumstances.
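A quick back-of-the-envelope calculation shows why a few percent still matters at archive scale. The Python sketch below assumes, purely for illustration, that accuracy is measured per page and that errors are independent; the page count and accuracy figures are made-up inputs, not measurements of any system.

```python
def expected_error_pages(total_pages: int, page_accuracy: float) -> int:
    """Expected number of pages with at least one extraction error,
    assuming a per-page accuracy rate (an illustrative simplification)."""
    return round(total_pages * (1 - page_accuracy))


# Illustrative inputs: a one-million-page archive at two accuracy levels.
for accuracy in (0.98, 0.99):
    print(accuracy, expected_error_pages(1_000_000, accuracy))
```

Under these assumptions, even 99% per-page accuracy leaves about 10,000 flawed pages in a million-page release, with no indication of which pages they are.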

The future outlook for AI’s interaction with PDFs is one of increasing integration and sophistication. As AI developers recognize the immense value contained within PDF archives, investment in specialized parsing technologies is expected to accelerate. The development of more robust and adaptable AI systems capable of understanding the visual and structural nuances of PDFs will unlock new possibilities for data analysis, research, and digital information management. The PDF, far from being an obsolete format, is poised to become a richer source of insights as AI continues to evolve its ability to decipher its complex, yet invaluable, contents. The question of "how many AIs does it take to read a PDF" is rapidly transitioning from a rhetorical curiosity to a tangible engineering problem, with significant implications for the future of artificial intelligence and its capacity to engage with the world’s digital documentary heritage.