Google, in collaboration with Samsung, has publicly demonstrated agentic AI features designed to execute multi-step tasks autonomously, a capability Apple has promised for Siri but has yet to ship. The rollout, initially targeting the Pixel 10 series and the recently announced Samsung Galaxy S26, is a concrete take on the AI functionality that Apple previewed at its 2024 Worldwide Developers Conference and subsequently postponed, leaving a notable gap in its product ecosystem.

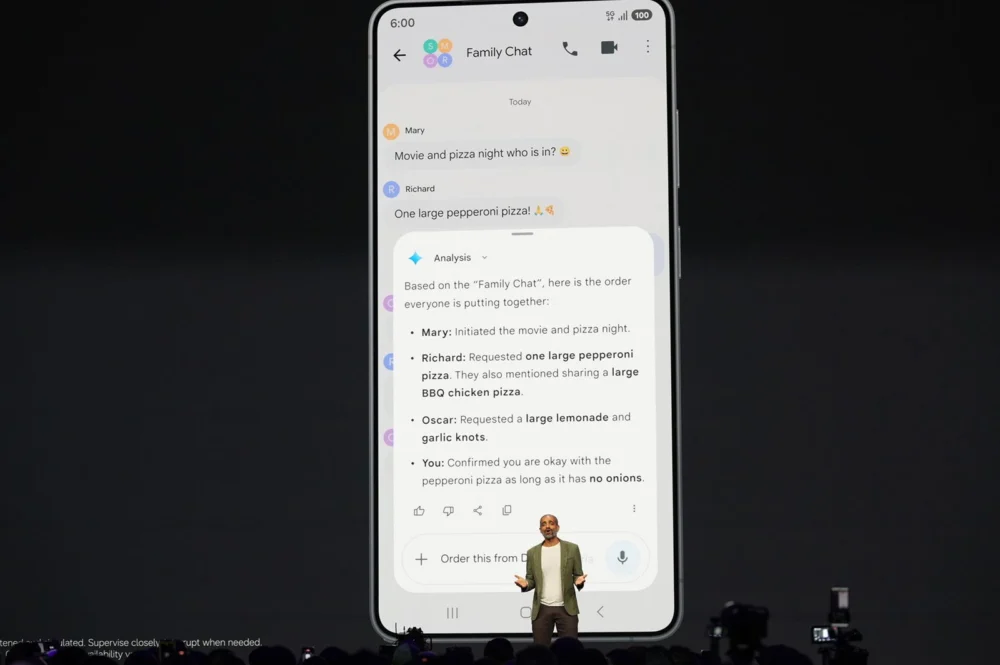

The unveiling came during a recent Google presentation in which Sameer Samat, President of Android, showed off Gemini’s agentic capabilities in a pre-recorded demonstration. In the scenario, Gemini worked through a family group chat to put together a pizza order. Samat instructed Gemini to analyze the conversation thread, work out each person’s preferences, and start the order in a third-party delivery application. The screen showed Gemini consolidating the group’s requests into a single order. The user then told Gemini by voice to place the order with a specific pizzeria. Gemini opened Grubhub and prepared the order, leaving only the final submission to the user. Once the order was ready, Gemini sent an alert so the user could review it and complete the transaction with a single button press.

The task itself is simple enough to handle directly in the app or even with a phone call, but the underlying technology is a substantial step forward for agentic AI, in which software agents perform complex, multi-stage actions on a user’s behalf. The move follows Google’s recent integration of Gemini’s auto-browsing capabilities into Chrome; extending that functionality directly into the Android operating system is a logical and ambitious next step. Google appears intent on positioning Gemini not merely as a conversational chatbot or a collection of AI models, but as a proactive agent and a productivity partner.

Should these agentic Gemini features launch in the near future, as Google has indicated, and absent any unexpected breakthrough from Apple, Google will have beaten Apple to some of the most anticipated AI features demonstrated at WWDC 2024. Apple’s own pre-recorded presentations highlighted Siri’s ability to understand on-screen context and act on it, for instance by adding an address from a message thread to a contact card or by carrying out actions across applications. Apple also said Siri would understand personal context, answering questions such as when a family member’s flight lands by retrieving the details from emails.

However, nearly two years after these announcements, these sophisticated Siri capabilities remain unavailable to users. Apple’s acknowledgment of delays was accompanied by the conspicuous removal of an advertisement that had showcased these very features. Further reporting suggests that some of these much-touted functionalities might not be implemented until iOS 27, indicating a prolonged development and integration cycle.

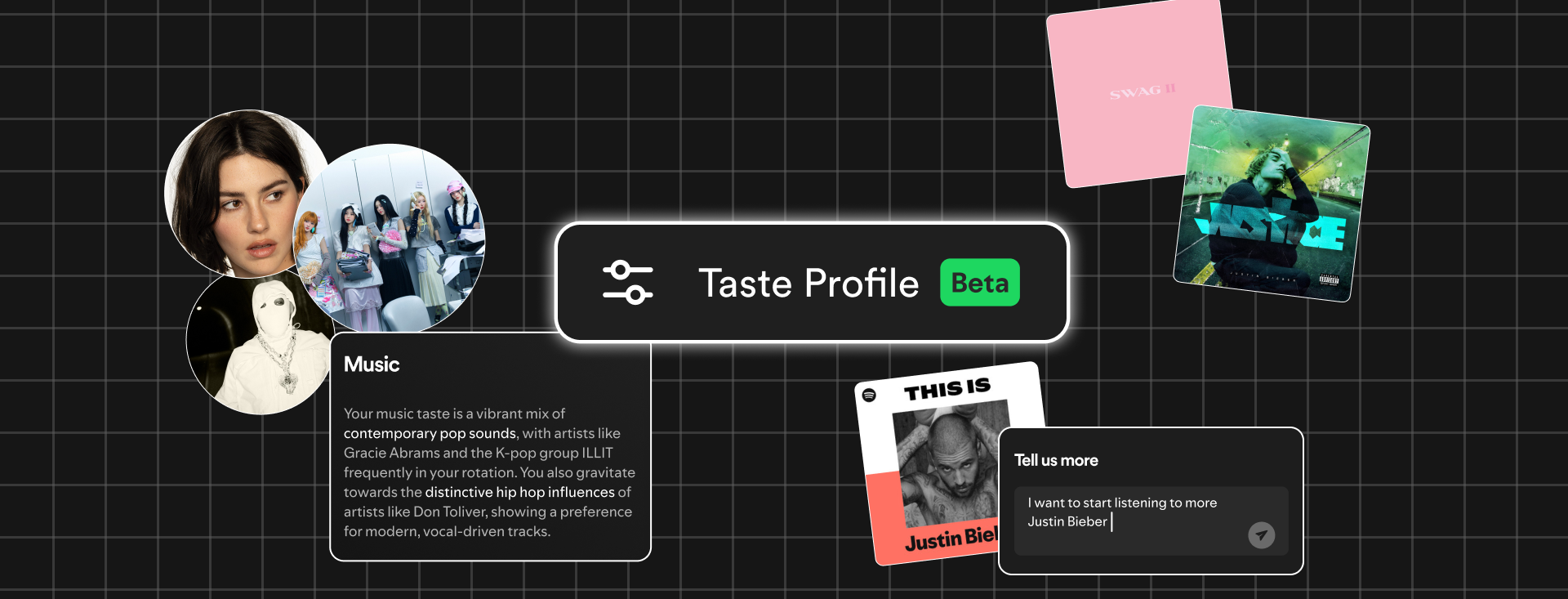

Open questions remain about how well Gemini’s new agentic capabilities will work and how widely they will be adopted; their usefulness will depend on how they perform in real-world conditions. Google has explicitly categorized this initial rollout as a "beta" phase, so users should expect rough edges. It is also unclear how many third-party developers will grant Gemini the permissions it needs to interact with their applications on behalf of users, an issue often referred to as "the DoorDash problem." Google has indicated that Gemini’s agentic functions will initially be limited to "select rideshare and food apps," suggesting a phased approach to integration and developer onboarding.

Despite these open questions, Google appears to have established a significant lead over Apple in mobile AI. Apple must now accelerate its efforts to close the gap and deliver on its previously announced, yet still unrealized, AI ambitions. The industry will be watching both the real-world performance of Gemini’s agentic features and Apple’s response. If Google and Samsung succeed, they could fundamentally alter user expectations for mobile assistants and pave the way for more proactive digital assistance.

The implications of this technological advancement extend far beyond mere convenience. The ability of AI agents to perform multi-step tasks autonomously has the potential to significantly boost productivity, streamline complex workflows, and democratize access to advanced digital services. For instance, in professional settings, agentic AI could automate routine administrative tasks, manage scheduling complexities, and even assist in data analysis, freeing up human capital for more strategic endeavors. In the consumer realm, the possibilities are equally vast, ranging from personalized travel planning and sophisticated financial management to enhanced accessibility for individuals with disabilities. The integration of such capabilities into the core operating systems of smartphones signifies a shift from passive digital tools to active, intelligent partners.

The competitive dynamic between Google and Apple in the AI space has long been a focal point for the technology industry. Apple, traditionally known for its seamless integration of hardware and software and its focus on user privacy, has adopted a more cautious approach to AI deployment, often prioritizing polish and security over rapid feature release. Google, on the other hand, with its deep roots in search and data analytics, has consistently pushed the boundaries of AI innovation, leveraging vast datasets and extensive research and development. This latest move by Google, supported by Samsung’s considerable market influence, suggests a strategic acceleration of its AI agenda, aimed at capturing market share and setting new industry standards.

The "agentic" nature of these AI features is particularly noteworthy. Unlike traditional AI assistants, which respond to direct commands, agentic AI is designed to understand a goal and then autonomously plan and execute the series of steps needed to achieve it. This requires a sophisticated understanding of context and intent, along with the ability to interact with multiple applications and services. Doing this on a mobile platform reflects significant advances in natural language understanding, reasoning, and task automation. The challenges, however, are manifold. Ensuring the reliability and safety of autonomous actions is paramount, as is addressing the ethical questions around data privacy and algorithmic bias.
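To make the goal, plan, and execute pattern concrete, here is a minimal Python sketch loosely modeled on the pizza demonstration. It is an illustration only, not Google’s implementation: every name in it (`Step`, `plan`, `run_agent`, the toy "I want" parsing) is hypothetical, and the plan is hard-coded where a real agent would derive it from the goal. What it shows is the shape of the loop, including a pause for user confirmation before the one irreversible step.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One unit of work in the agent's plan (hypothetical structure)."""
    name: str
    run: Callable[[dict], None]        # mutates the shared state dict
    needs_confirmation: bool = False   # True for irreversible actions


def collect_preferences(state: dict) -> None:
    # Toy stand-in for language understanding: take the text after "I want ".
    marker = "I want "
    state["preferences"] = [
        msg.split(marker, 1)[1].strip()
        for msg in state["messages"]
        if marker in msg
    ]


def build_cart(state: dict) -> None:
    # Merge duplicate requests into a single, ordered cart.
    state["cart"] = sorted(set(state["preferences"]))


def submit_order(state: dict) -> None:
    # Placeholder for the call into a delivery app.
    state["submitted"] = True


def plan(goal: str) -> list[Step]:
    # Assumption: a fixed plan. A real agent would generate this from the goal.
    return [
        Step("collect preferences", collect_preferences),
        Step("build cart", build_cart),
        Step("submit order", submit_order, needs_confirmation=True),
    ]


def run_agent(goal: str, state: dict,
              confirm: Callable[[Step, dict], bool]) -> list[str]:
    """Execute the plan in order, stopping if the user declines a gated step."""
    log = []
    for step in plan(goal):
        if step.needs_confirmation and not confirm(step, state):
            log.append(f"paused before: {step.name}")
            break
        step.run(state)
        log.append(f"done: {step.name}")
    return log


if __name__ == "__main__":
    chat = ["Dad: I want pepperoni", "Mia: I want margherita", "Sam: sounds good"]
    state = {"messages": chat, "submitted": False}
    # The user has not confirmed yet, so the agent stops short of submitting.
    for line in run_agent("order pizza for the family", state, lambda s, st: False):
        print(line)
```

Keeping the confirmation check in the runner rather than inside each step means no step can skip it, which mirrors the demonstration’s final button press.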

The "DoorDash problem," as it has been colloquially termed, highlights a critical hurdle: developer adoption and platform openness. For agentic AI to function effectively across a broad spectrum of services, applications must be designed to allow such agents to interact with them. This may require new APIs, standardized protocols, and a willingness from developers to grant access to their platforms. The success of Google’s initiative will likely depend not only on its technological prowess but also on its ability to foster an ecosystem that supports and encourages the integration of agentic AI. The current limited scope to "select rideshare and food apps" indicates a pragmatic, phased approach, likely designed to test the waters and build developer confidence.
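One way to picture the opt-in this would require is a per-app declaration of which actions an agent may perform, checked before every call. The Python sketch below is purely illustrative and assumes nothing about how Android or Gemini actually handle permissions; `AppManifest`, `AgentGateway`, and the app identifiers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppManifest:
    """Hypothetical declaration of the actions an app exposes to agents."""
    app_id: str
    agent_actions: frozenset = frozenset()


class AgentGateway:
    """Checks every agent request against what the app's developer opted into."""

    def __init__(self, manifests):
        self._manifests = {m.app_id: m for m in manifests}

    def is_allowed(self, app_id: str, action: str) -> bool:
        manifest = self._manifests.get(app_id)
        # Apps with no manifest have not opted in, so everything is denied.
        return manifest is not None and action in manifest.agent_actions

    def invoke(self, app_id: str, action: str) -> str:
        if not self.is_allowed(app_id, action):
            raise PermissionError(
                f"{app_id} has not opted in to agent action {action!r}"
            )
        # Placeholder for dispatching the action to the app.
        return f"{app_id}:{action}"


if __name__ == "__main__":
    gateway = AgentGateway([
        AppManifest("food.example", frozenset({"build_cart"})),
    ])
    print(gateway.is_allowed("food.example", "build_cart"))
    print(gateway.is_allowed("bank.example", "transfer"))
```

Denying by default means an agent reaches only as far as developers allow, which is why the breadth of developer participation matters as much as the agent’s own capabilities.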

The delay in Apple’s AI features, while frustrating for consumers and potentially damaging to its competitive positioning, may also be a strategic choice. Apple’s emphasis on user experience and privacy means that any AI feature released must meet exceptionally high standards. The company’s decision to postpone the rollout of its advanced Siri capabilities could be an effort to ensure that when they do arrive, they are robust, secure, and deliver a seamless user experience, aligning with Apple’s brand ethos. However, in the fast-paced world of AI, such delays can create openings for competitors to establish a foothold and shape market expectations.

Looking ahead, the landscape of mobile AI is set to become increasingly dynamic. The successful deployment of agentic AI by Google and Samsung could catalyze a renewed wave of innovation across the industry. Other major players will likely be compelled to accelerate their own AI development roadmaps, leading to a more competitive and rapidly evolving market. The focus will shift from simple voice commands to more sophisticated, goal-oriented interactions, where AI assistants become indispensable partners in navigating the complexities of daily life and work. The true measure of success will lie not only in the technical capabilities demonstrated but in the tangible benefits they deliver to users, fostering trust, enhancing productivity, and ultimately, redefining the relationship between humans and their technology. The coming months and years will undoubtedly be pivotal in shaping the future of artificial intelligence on our most personal devices.