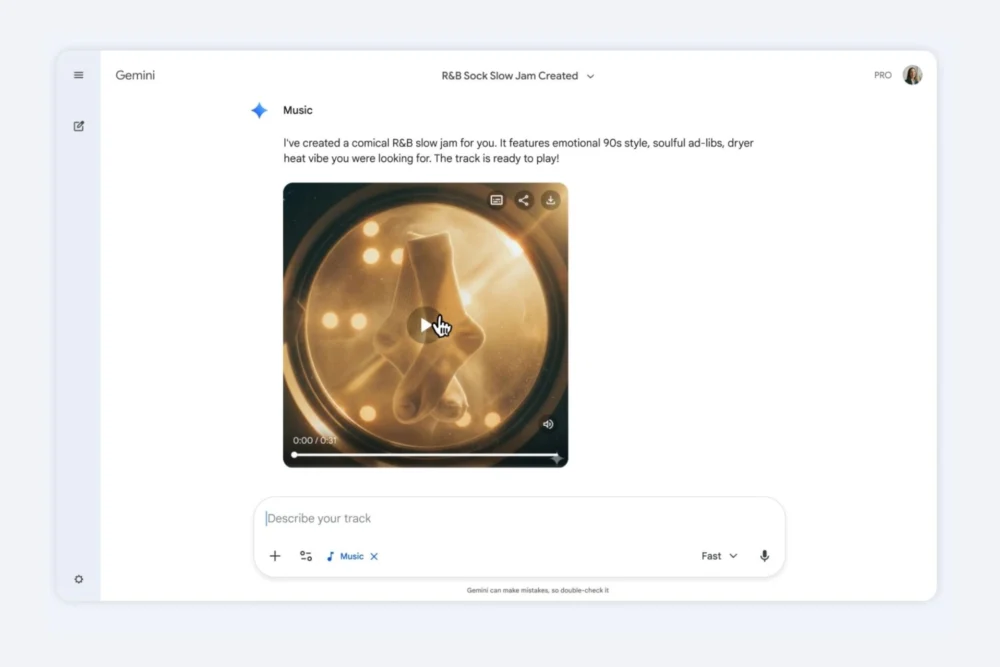

Google has integrated AI music generation into its Gemini conversational AI platform, letting users create original musical pieces directly within the chatbot interface. The feature is powered by DeepMind’s Lyria 3 audio model and goes beyond simple text prompts by also accepting visual and emotional cues.

The integration extends consumer generative AI from text and image creation to the more complex domain of audio. Lyria 3, the engine behind the feature, has been developed with attention to ethical considerations and creative originality. While previous iterations of Lyria were primarily accessible through Google Cloud’s Vertex AI platform, its deployment within the Gemini app is a deliberate effort to broaden its reach to a global audience. The move places Google in the growing field of AI-powered music creation, where TikTok and Microsoft Copilot have introduced their own generative music tools.

The core of this new capability lies in Lyria 3’s sophisticated text-to-music generation. Users can now articulate their musical desires through descriptive language, specifying genres, evoking specific moods, or even drawing inspiration from personal memories. For instance, a user might request an "upbeat jazz piece that captures the feeling of a bustling city at dawn," or a "melancholy acoustic ballad reflecting on a past friendship." The AI is designed to interpret these prompts and translate them into original instrumental compositions or songs complete with AI-generated lyrics. This level of intuitive control empowers individuals with no formal musical training to explore their creative visions and bring them to life.

Beyond textual descriptions, Lyria 3 also accepts multimodal input. Users can upload photographs or video clips, providing visual context that Gemini then uses to generate a musical track. The AI analyzes the visual elements (colors, themes, movement, and overall aesthetic) to inform the composition, aiming for music that fits the visual prompt. Pairing imagery with sound in this way lets users create soundtracks for personal moments, digital art, or early-stage video projects.

Google has emphasized that the primary objective of these AI-generated tracks is not to compete with professional musicians or produce studio-quality masterpieces. Instead, the focus is on providing a fun, accessible, and unique medium for self-expression. This framing acknowledges the current limitations of AI in replicating the depth of human emotion and artistic intent found in professional music, while highlighting its potential as a powerful tool for personal exploration and creative experimentation. The aim is to lower the barrier to entry for musical creation, making it an engaging pastime for a broader demographic.

To make the tracks easier to share, Gemini will automatically attach custom cover art. The artwork, generated by Google’s Nano Banana image model, is designed to complement the musical piece and give it a polished presentation, whether for sharing across social media platforms or for personal archiving. Producing the audio and the visual together in one step also streamlines the workflow for users.

Lyria 3 also reaches beyond the Gemini app: Google is integrating it into YouTube’s Dream Track tool. This lets YouTube creators generate custom soundtracks for their Shorts, offering a quick way to add original music to short-form video. The move is particularly relevant to the creator economy, giving aspiring and established creators a way to differentiate their work with unique audio.

The development of Lyria 3 has been accompanied by a strong emphasis on addressing the complex issue of copyright and intellectual property within the realm of AI-generated music. Google has stated that it has been "very mindful" of these concerns throughout the development process. The tool is explicitly designed to foster "original expression" rather than to directly mimic existing artists. When a user prompts Gemini for a track that might resemble a particular artist’s style or mood, the AI is programmed to generate a piece that shares a "similar style or mood" while employing filters to ensure that the output does not infringe upon existing copyrighted material. This nuanced approach aims to balance creative freedom with legal and ethical compliance, navigating the challenging terrain of AI-generated artistic output.

Lyria has been in development since 2023, but until now it was largely confined to the enterprise-level Vertex AI platform on Google Cloud. The expansion into the Gemini app brings its generative capabilities to a much wider consumer base. The launch positions Google to capitalize on growing interest in AI music, but it arrives after competitors have established a presence: TikTok and Microsoft Copilot have both introduced AI music creation tools, part of a broader industry trend towards building such features into mainstream applications.

The implications of this development are far-reaching. For individuals, it offers a novel avenue for personal expression, entertainment, and even therapeutic exploration through music. For content creators, it provides a powerful and accessible tool to enhance their work and stand out in a crowded digital landscape. For the music industry, it raises questions about the future of music creation, distribution, and copyright, prompting a re-evaluation of traditional models in light of emerging AI technologies.

Looking ahead, the expansion of Lyria 3 within Gemini and its integration into other Google products suggest a commitment to developing AI as a creative partner. Future versions may offer finer control, a wider range of musical styles, and more advanced features such as real-time collaboration or longer-form compositions. The ongoing debate around AI ethics, copyright, and artistic authenticity will shape how these tools develop. AI music generation is still at an early stage, but initiatives like Google’s Lyria 3 point towards a more accessible and diverse musical landscape.